A

Akrivas, Giorgos

Ed. by Z. Ma

pp. 247-272 Springer, 2006

The semantic gap is the main problem of content-based multimedia retrieval. This refers to the extraction of the semantic content of multimedia documents, the understanding of user information needs and requests, as well as to the matching between the two. In this chapter we focus on the analysis of multimedia documents for the extraction of their semantic content. Our approach is based on fuzzy algebra, as well as fuzzy ontological information. We start by outlining the methodologies that may lead to the creation of a semantic index; these methodologies are integrated in a video annotating environment. Based on the semantic index, we then explain how multimedia content may be analyzed for the extraction of semantic information in the form of thematic categorization. The latter relies on stored knowledge and a fuzzy hierarchical clustering algorithm that uses a similarity measure that is based on the notion of context.

@incollection{B3,

title = {Automatic thematic categorization of multimedia documents using ontological information and fuzzy algebra},

author = {Wallace, Manolis and Mylonas, Phivos and Akrivas, Giorgos and Avrithis, Yannis and Kollias, Stefanos},

publisher = {Springer},

booktitle = {Soft Computing in Ontologies and Semantic Web},

editor = {Z. Ma},

volume = {204},

pages = {247--272},

year = {2006}

}

Rennes, France Sep 2003

Object detection techniques are coming closer to the automatic detection and identification of objects in multimedia documents. Still, this is not sufficient for the understanding of multimedia content, mainly because a simple object may be related to multiple topics, few of which are indeed related to a given document. In this paper we determine the thematic categories that are related to a document based on the objects that have been automatically detected in it. Our approach relies on stored knowledge and a fuzzy hierarchical clustering algorithm; this algorithm uses a similarity measure that is based on the notion of context. The context is extracted using fuzzy ontological relations.

@conference{C25,

title = {Using context and fuzzy relations to interpret multimedia content},

author = {Wallace, Manolis and Akrivas, Giorgos and Mylonas, Phivos and Avrithis, Yannis and Kollias, Stefanos},

booktitle = {Proceedings of 3rd International Workshop on Content-Based Multimedia Indexing (CBMI)},

month = {9},

address = {Rennes, France},

year = {2003}

}

Tenerife, Spain Dec 2001

A system for digitization, storage and retrieval of audiovisual information and its associated data (meta-info) is presented. The principles of the evolving MPEG-7 standard have been adopted for the creation of the data model used by the system, permitting efficient separation of database design, content description, business logic and presentation of query results. XML Schema is used in defining the data model, and XML in describing audiovisual content. Issues regarding problems that emerged during system design and their solutions are discussed, such as customization, deviations from the standard MPEG-7 DSs or even the design of entirely custom DSs. Although the system includes modules for digitization, annotation, archiving and intelligent data mining, the paper mainly focuses on the use of MPEG-7 as the information model.

@conference{C21,

title = {An Intelligent System for Retrieval and Mining of Audiovisual Material Based on the {MPEG-7} Description Schemes},

author = {Akrivas, Giorgos and Ioannou, Spyros and Karakoulakis, Elias and Karpouzis, Kostas and Avrithis, Yannis and Delopoulos, Anastasios and Kollias, Stefanos and Varlamis, Iraklis and Vazirgiannis, Michalis},

booktitle = {Proceedings of European Symposium on Intelligent Technologies, Hybrid Systems and their implementation on Smart Adaptive Systems (EUNITE)},

month = {12},

address = {Tenerife, Spain},

year = {2001}

}

Amsaleg, Laurent

New Orleans, LA, US Dec 2023

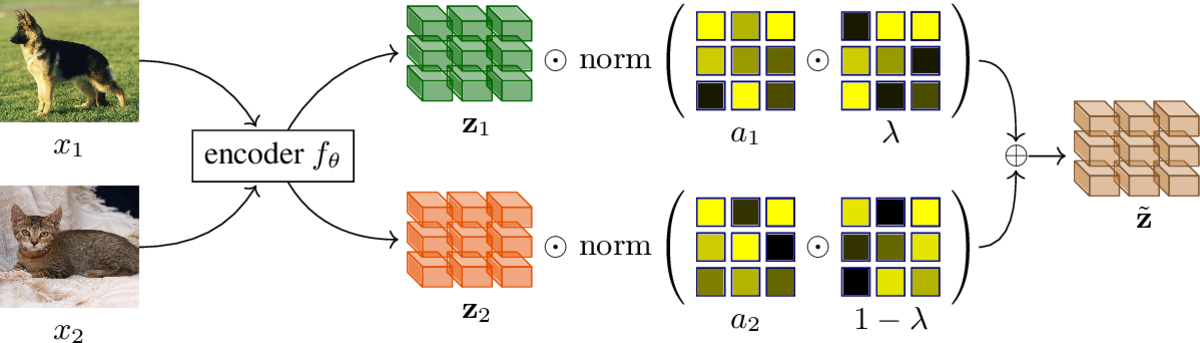

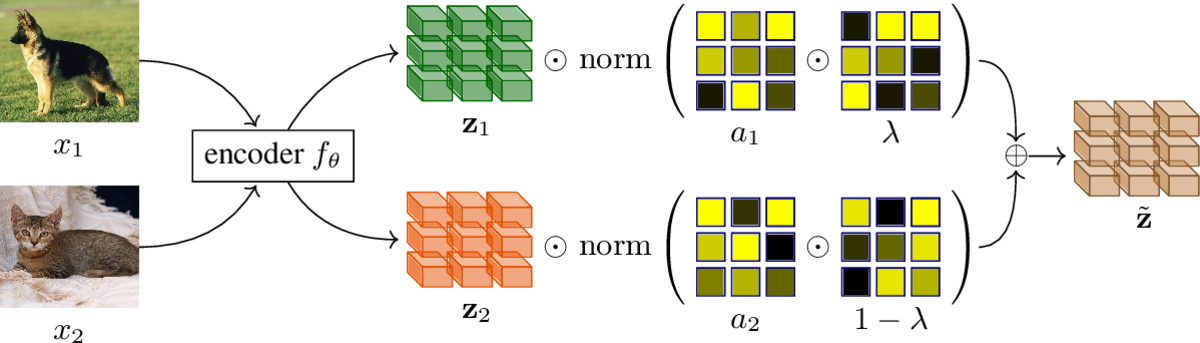

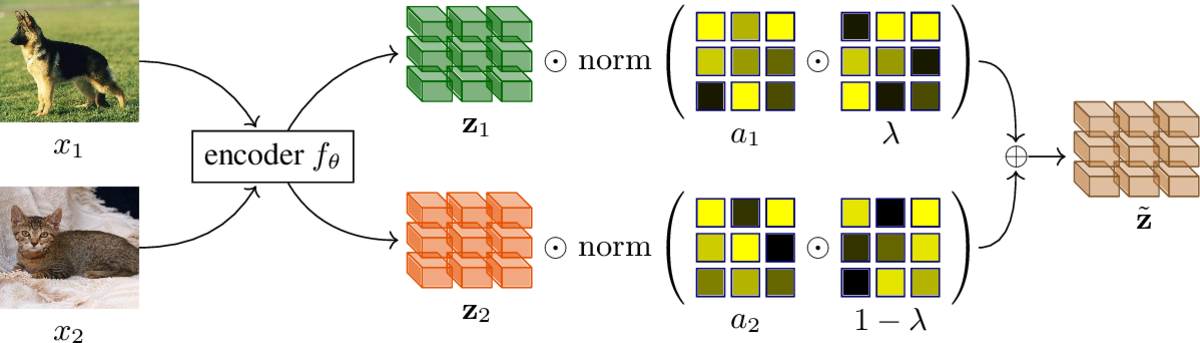

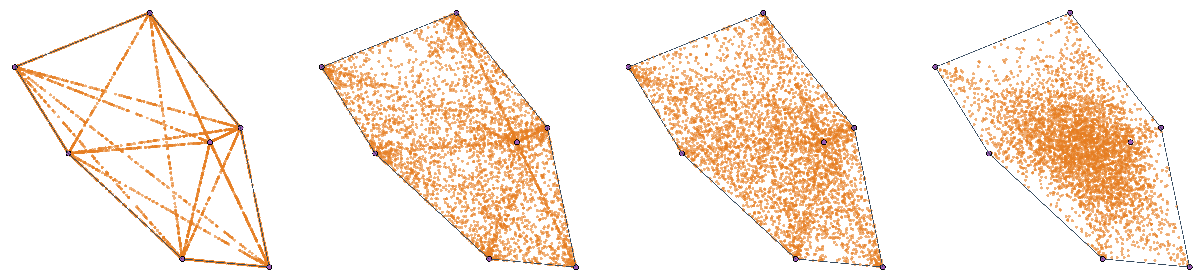

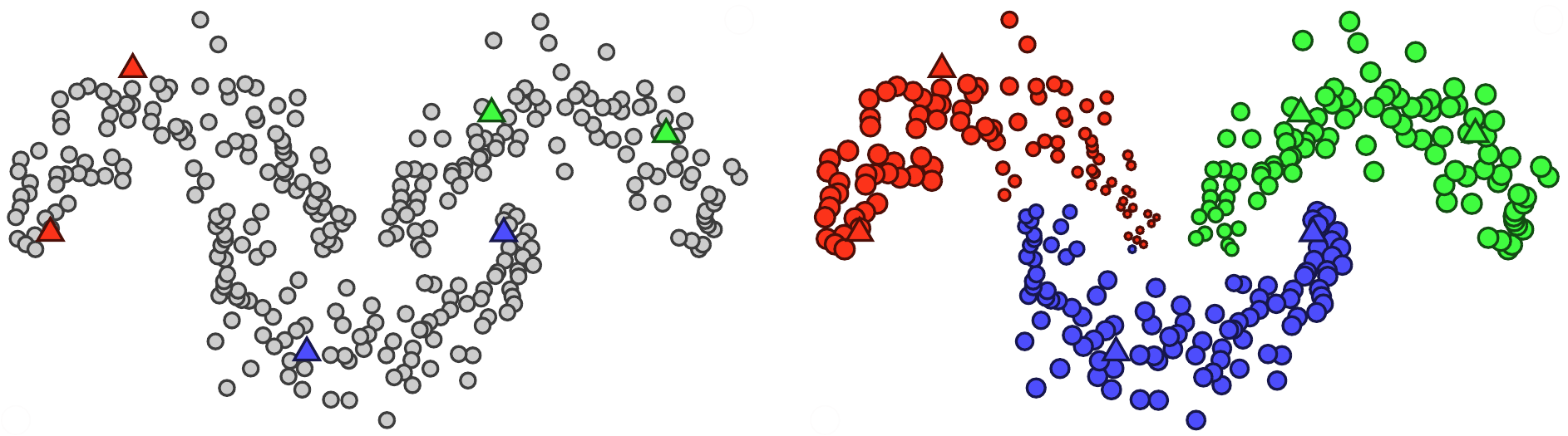

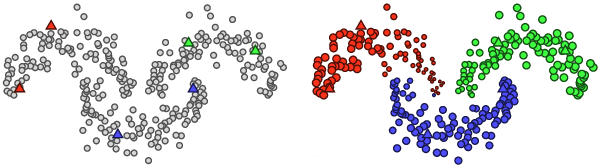

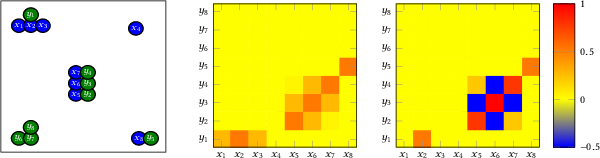

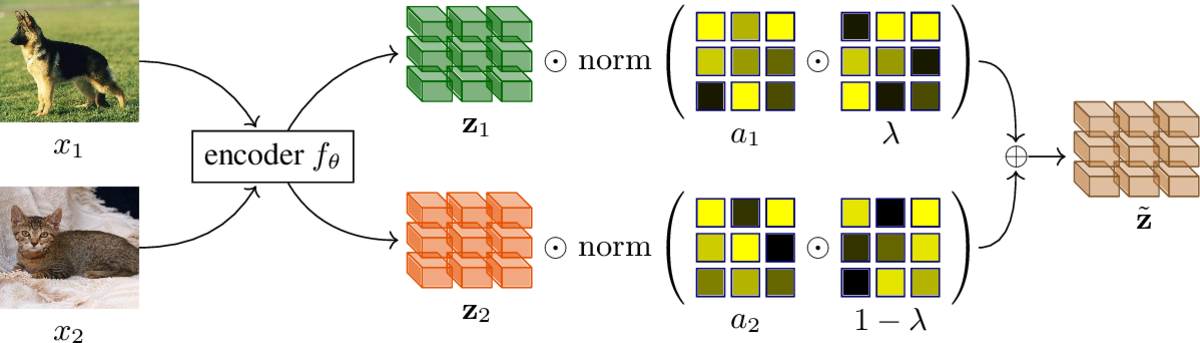

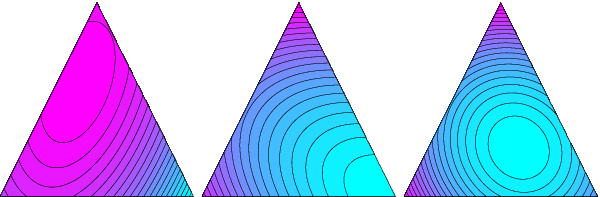

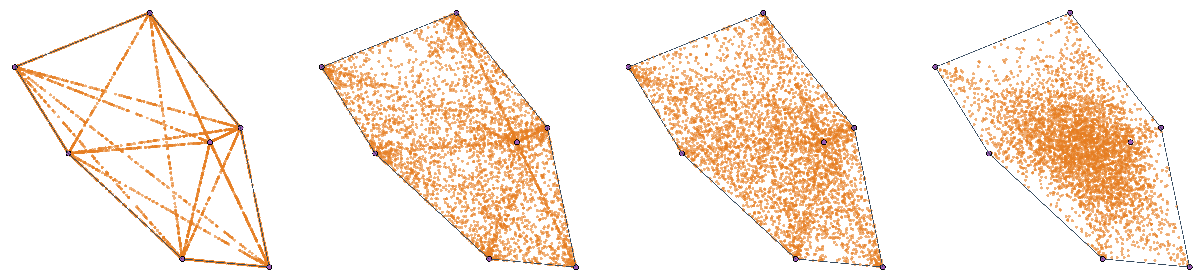

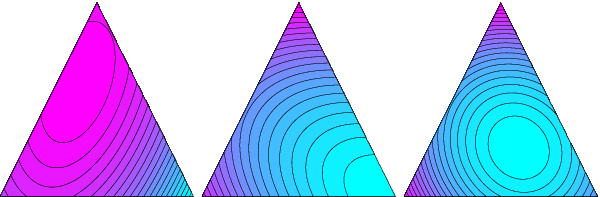

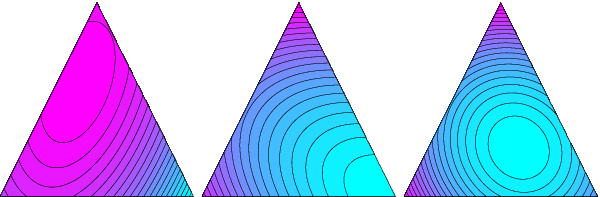

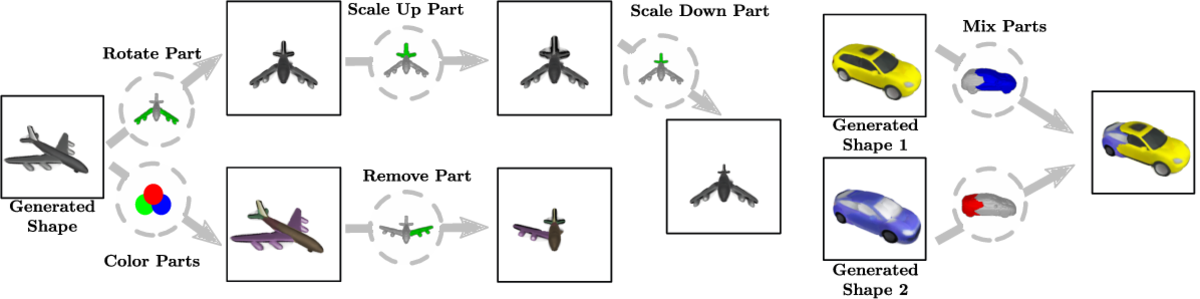

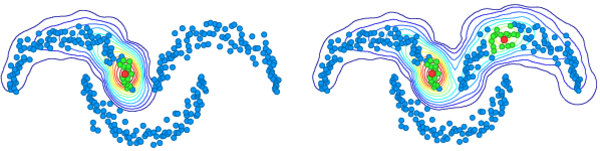

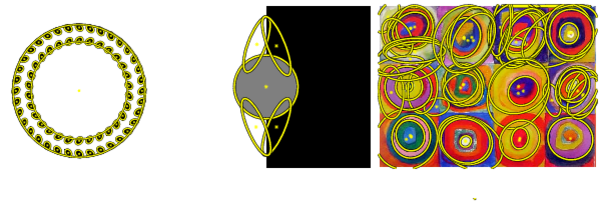

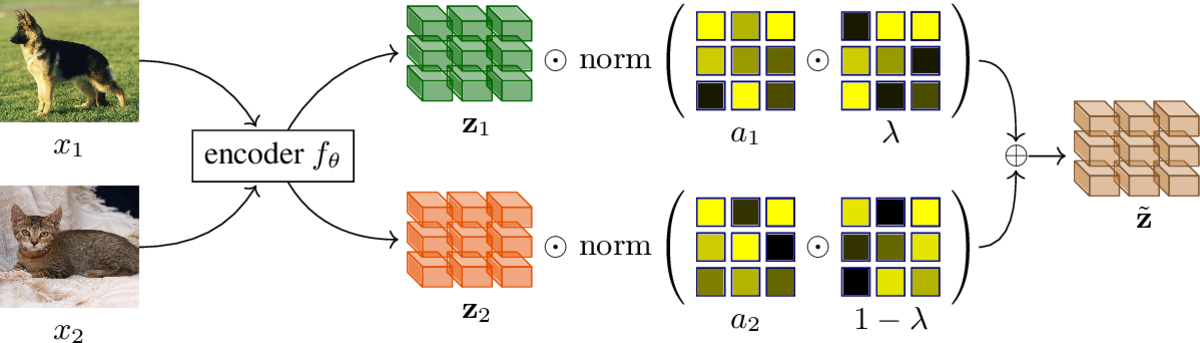

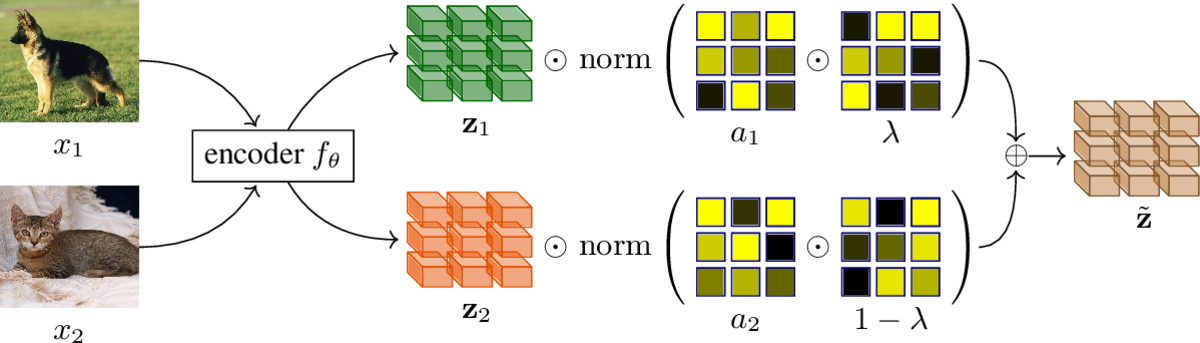

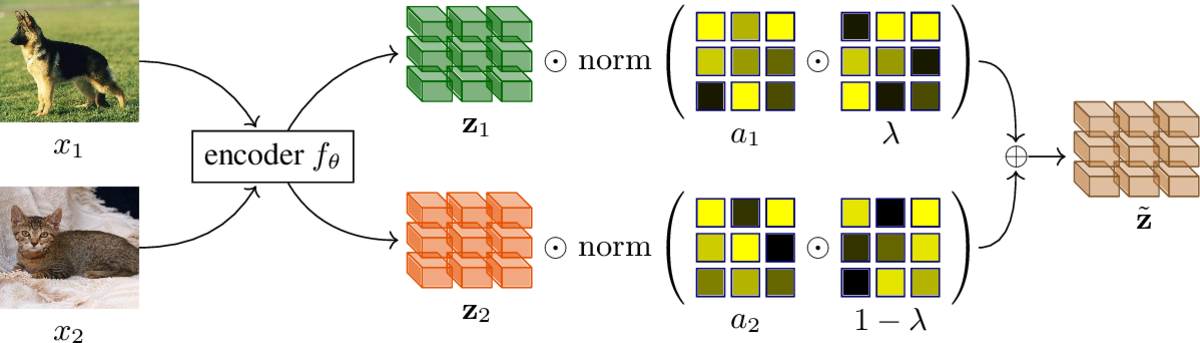

Mixup refers to interpolation-based data augmentation, originally motivated as a way to go beyond empirical risk minimization (ERM). Its extensions mostly focus on the definition of interpolation and the space (input or embedding) where it takes place, while the augmentation process itself is less studied. In most methods, the number of generated examples is limited to the mini-batch size and the number of examples being interpolated is limited to two (pairs), in the input space.

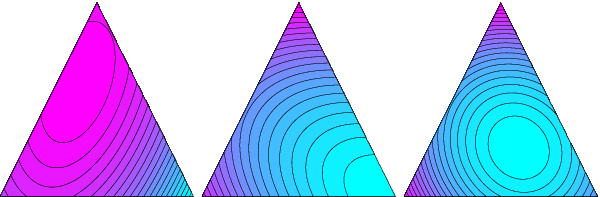

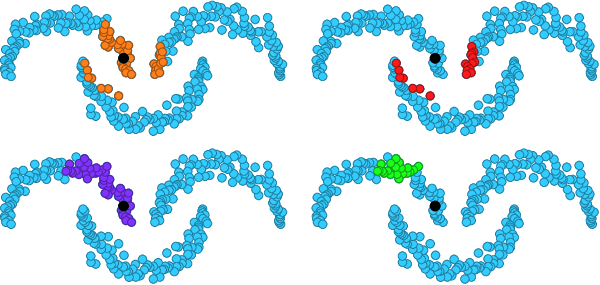

We make progress in this direction by introducing MultiMix, which generates an arbitrarily large number of interpolated examples beyond the mini-batch size, and interpolates the entire mini-batch in the embedding space. Effectively, we sample on the entire convex hull of the mini-batch rather than along linear segments between pairs of examples.

On sequence data we further extend to Dense MultiMix. We densely interpolate features and target labels at each spatial location and also apply the loss densely. To mitigate the lack of dense labels, we inherit labels from examples and weight interpolation factors by attention as a measure of confidence.

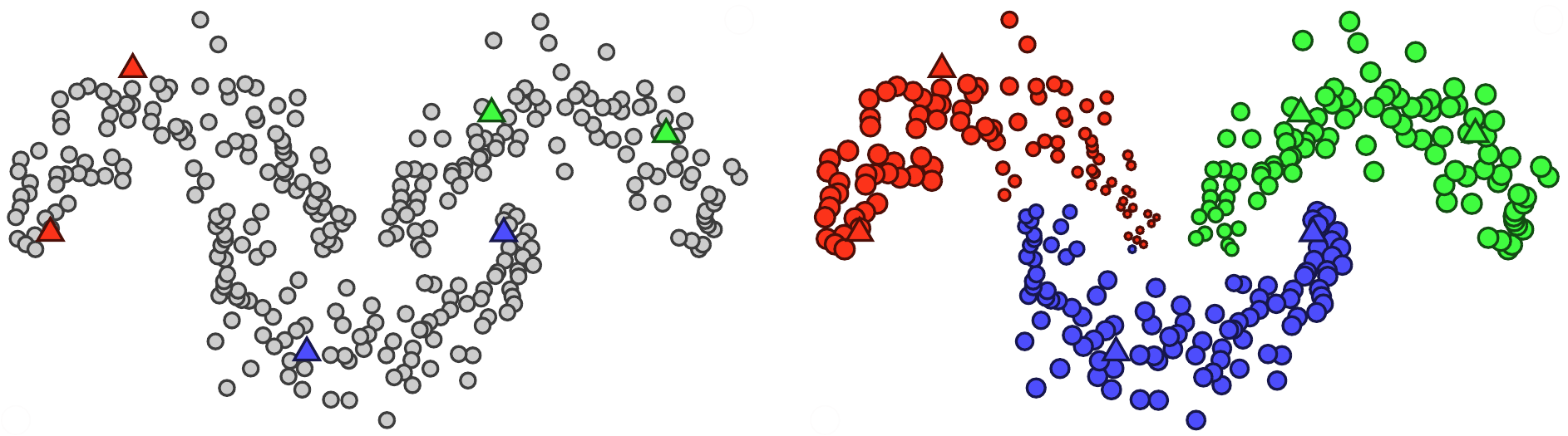

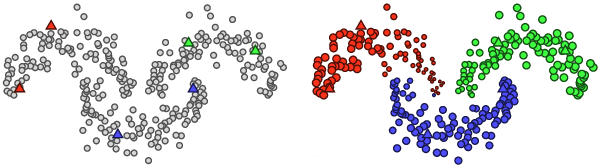

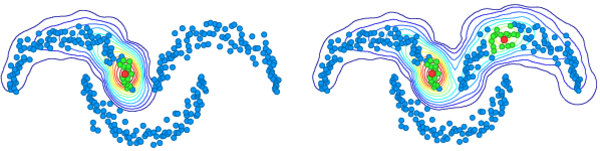

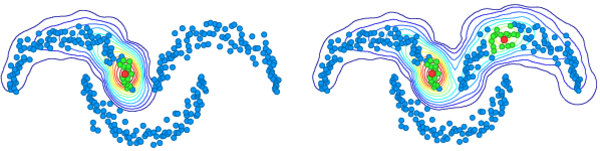

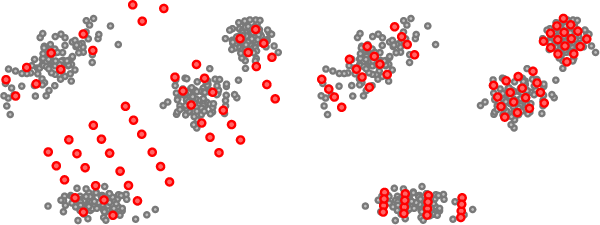

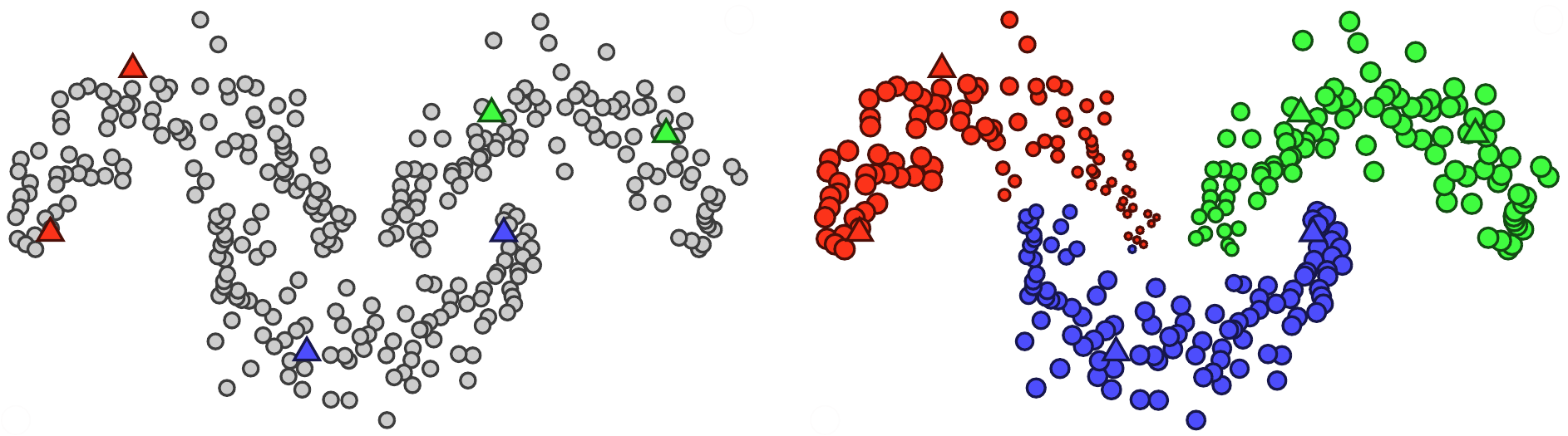

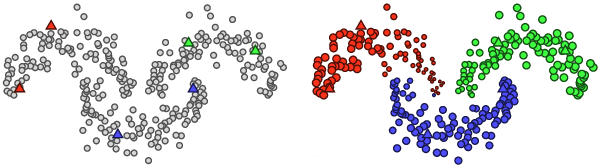

Overall, we increase the number of loss terms per mini-batch by orders of magnitude at little additional cost. This is only possible because of interpolating in the embedding space. We empirically show that our solutions yield significant improvement over state-of-the-art mixup methods on four different benchmarks, despite interpolation being only linear. By analyzing the embedding space, we show that the classes are more tightly clustered and uniformly spread over the embedding space, thereby explaining the improved behavior.

@conference{C131,

title = {Embedding Space Interpolation Beyond Mini-Batch, Beyond Pairs and Beyond Examples},

author = {Venkataramanan, Shashanka and Kijak, Ewa and Amsaleg, Laurent and Avrithis, Yannis},

booktitle = {Proceedings of Conference on Neural Information Processing Systems (NeurIPS)},

month = {12},

address = {New Orleans, LA, US},

year = {2023}

}

Mixup refers to interpolation-based data augmentation, originally motivated as a way to go beyond empirical risk minimization (ERM). Its extensions mostly focus on the definition of interpolation and the space (input or feature) where it takes place, while the augmentation process itself is less studied. In most methods, the number of generated examples is limited to the mini-batch size and the number of examples being interpolated is limited to two (pairs), in the input space.

We make progress in this direction by introducing MultiMix, which generates an arbitrarily large number of interpolated examples beyond the mini-batch size and interpolates the entire mini-batch in the embedding space. Effectively, we sample on the entire convex hull of the mini-batch rather than along linear segments between pairs of examples.

On sequence data, we further extend to Dense MultiMix. We densely interpolate features and target labels at each spatial location and also apply the loss densely. To mitigate the lack of dense labels, we inherit labels from examples and weight interpolation factors by attention as a measure of confidence.

Overall, we increase the number of loss terms per mini-batch by orders of magnitude at little additional cost. This is only possible because of interpolating in the embedding space. We empirically show that our solutions yield significant improvement over state-of-the-art mixup methods on four different benchmarks, despite interpolation being only linear. By analyzing the embedding space, we show that the classes are more tightly clustered and uniformly spread over the embedding space, thereby explaining the improved behavior.

@article{R46,

title = {Embedding Space Interpolation Beyond Mini-Batch, Beyond Pairs and Beyond Examples},

author = {Venkataramanan, Shashanka and Kijak, Ewa and Amsaleg, Laurent and Avrithis, Yannis},

journal = {arXiv preprint arXiv:2311.05538},

month = {11},

year = {2023}

}

New Orleans, LA, US Jun 2022

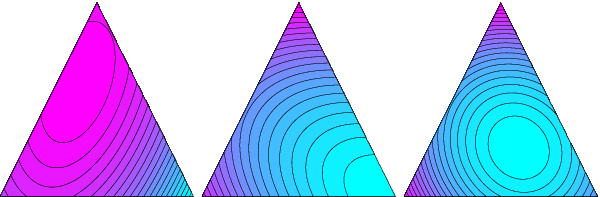

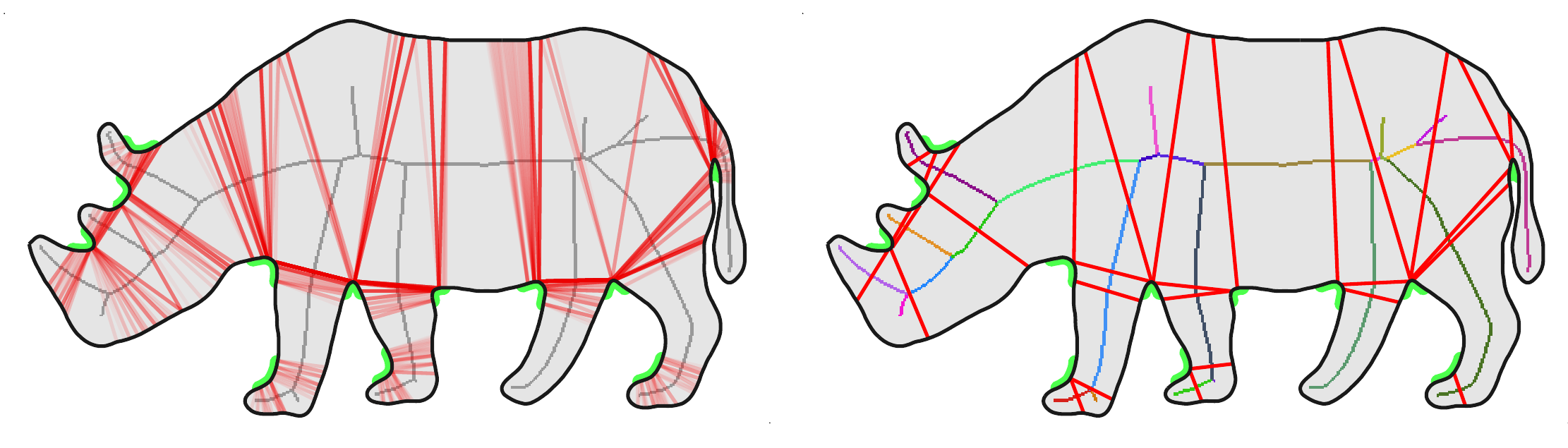

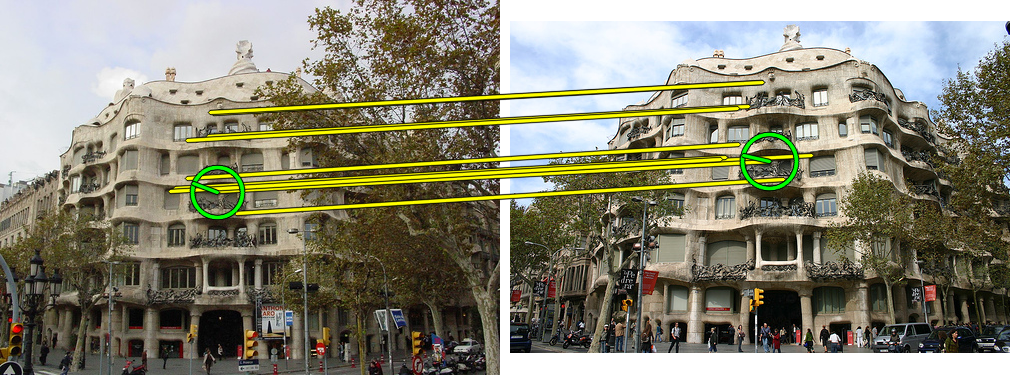

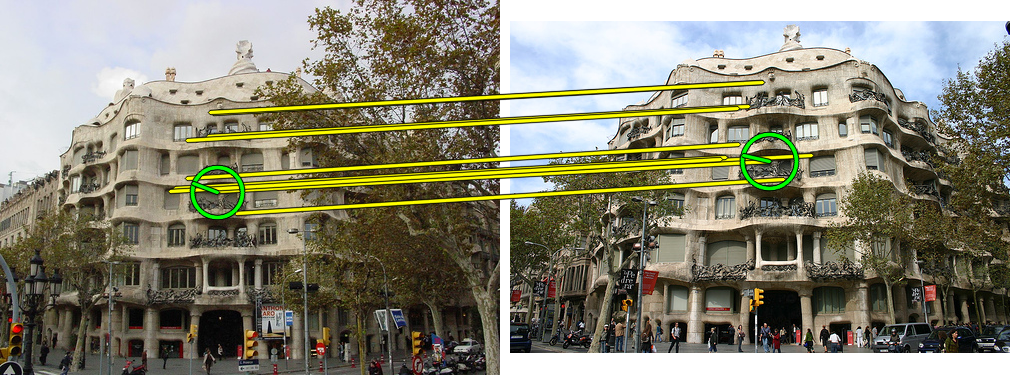

Mixup is a powerful data augmentation method that interpolates between two or more examples in the input or feature space and between the corresponding target labels. Many recent mixup methods focus on cutting and pasting two or more objects into one image, which is more about efficient processing than interpolation. However, how to best interpolate images is not well defined. In this sense, mixup has been connected to autoencoders, because often autoencoders "interpolate well", for instance generating an image that continuously deforms into another.

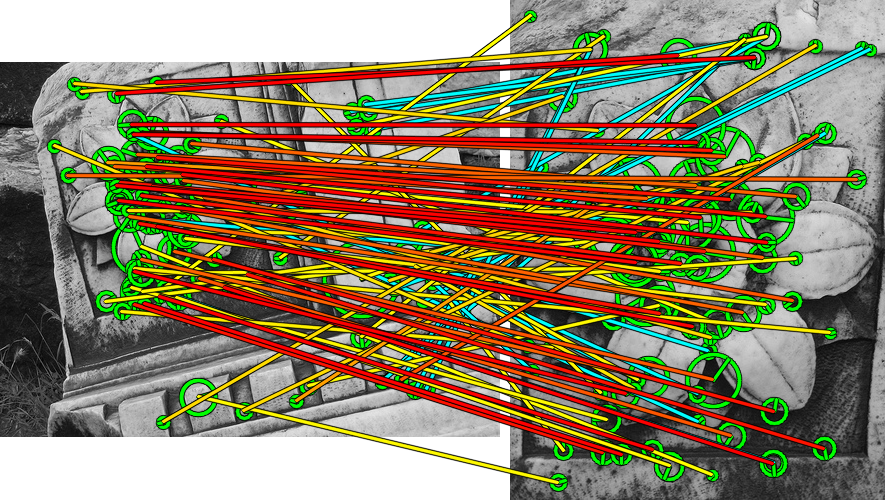

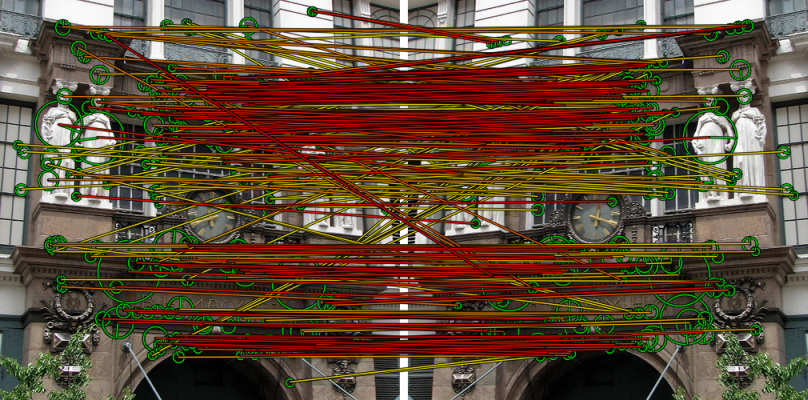

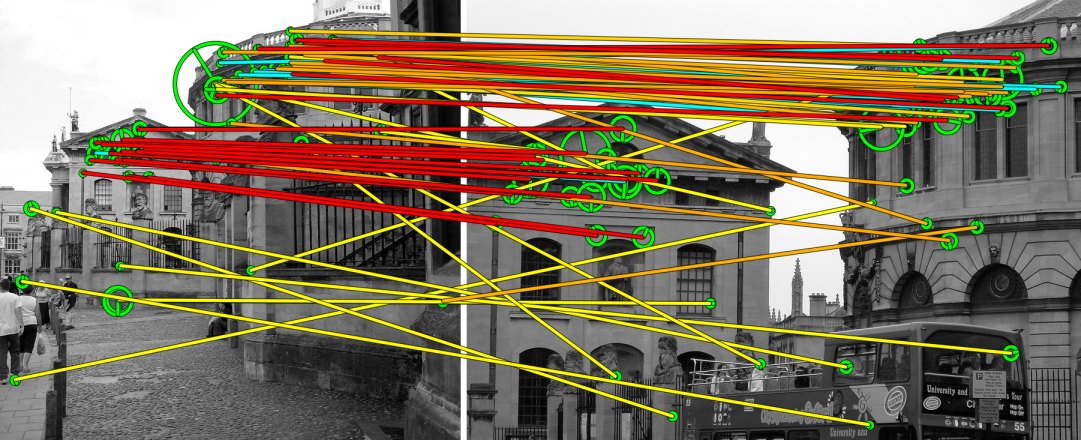

In this work, we revisit mixup from the interpolation perspective and introduce AlignMix, where we geometrically align two images in the feature space. The correspondences allow us to interpolate between two sets of features, while keeping the locations of one set. Interestingly, this gives rise to a situation where mixup retains mostly the geometry or pose of one image and the texture of the other, connecting it to style transfer. More than that, we show that an autoencoder can still improve representation learning under mixup, without the classifier ever seeing decoded images. AlignMix outperforms state-of-the-art mixup methods on five different benchmarks.

@conference{C124,

title = {{AlignMixup}: Improving representations by interpolating aligned features},

author = {Venkataramanan, Shashanka and Kijak, Ewa and Amsaleg, Laurent and Avrithis, Yannis},

booktitle = {Proceedings of IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {6},

address = {New Orleans, LA, US},

year = {2022}

}

Virtual Apr 2022

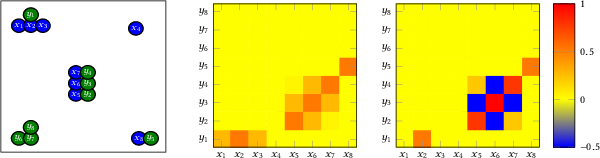

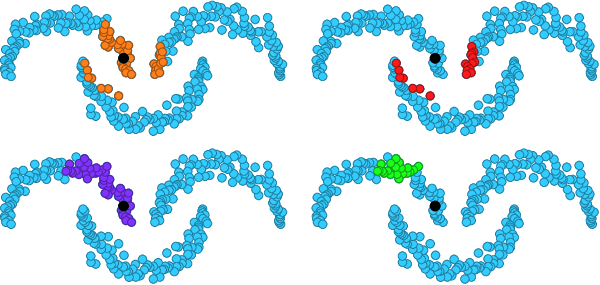

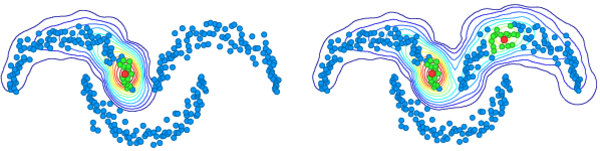

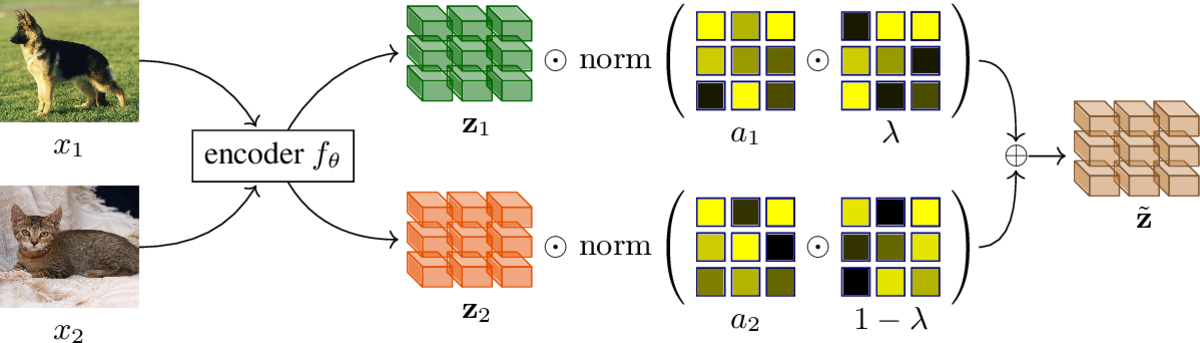

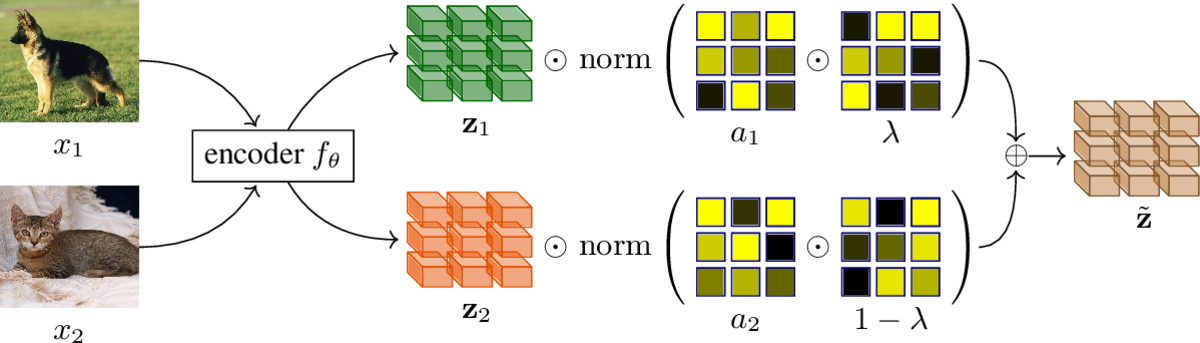

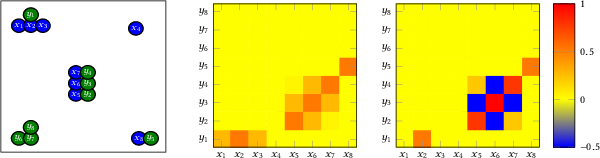

Metric learning involves learning a discriminative representation such that embeddings of similar classes are encouraged to be close, while embeddings of dissimilar classes are pushed far apart. State-of-the-art methods focus mostly on sophisticated loss functions or mining strategies. On the one hand, metric learning losses consider two or more examples at a time. On the other hand, modern data augmentation methods for classification consider two or more examples at a time. The combination of the two ideas is under-studied.

In this work, we aim to bridge this gap and improve representations using mixup, which is a powerful data augmentation approach interpolating two or more examples and corresponding target labels at a time. This task is challenging because, unlike classification, the loss functions used in metric learning are not additive over examples, so the idea of interpolating target labels is not straightforward. To the best of our knowledge, we are the first to investigate mixing both examples and target labels for deep metric learning. We develop a generalized formulation that encompasses existing metric learning loss functions and modify it to accommodate for mixup, introducing Metric Mix, or Metrix. We also introduce a new metric---utilization---to demonstrate that by mixing examples during training, we are exploring areas of the embedding space beyond the training classes, thereby improving representations. To validate the effect of improved representations, we show that mixing inputs, intermediate representations or embeddings along with target labels significantly outperforms state-of-the-art metric learning methods on four benchmark deep metric learning datasets.

@conference{C123,

title = {It Takes Two to Tango: Mixup for Deep Metric Learning},

author = {Venkataramanan, Shashanka and Psomas, Bill and Kijak, Ewa and Amsaleg, Laurent and Karantzalos, Konstantinos and Avrithis, Yannis},

booktitle = {Proceedings of International Conference on Learning Representations (ICLR)},

month = {4},

address = {Virtual},

year = {2022}

}

Mixup refers to interpolation-based data augmentation, originally motivated as a way to go beyond empirical risk minimization (ERM). Yet, its extensions focus on the definition of interpolation and the space where it takes place, while the augmentation itself is less studied: For a mini-batch of size $m$, most methods interpolate between $m$ pairs with a single scalar interpolation factor $\lambda$.

In this work, we make progress in this direction by introducing MultiMix, which interpolates an arbitrary number $n$ of tuples, each of length $m$, with one vector $\lambda$ per tuple. On sequence data, we further extend to dense interpolation and loss computation over all spatial positions. Overall, we increase the number of tuples per mini-batch by orders of magnitude at little additional cost. This is possible by interpolating at the very last layer before the classifier. Finally, to address inconsistencies due to linear target interpolation, we introduce a self-distillation approach to generate and interpolate synthetic targets.

We empirically show that our contributions result in significant improvement over state-of-the-art mixup methods on four benchmarks. By analyzing the embedding space, we observe that the classes are more tightly clustered and uniformly spread over the embedding space, thereby explaining the improved behavior.
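As a rough illustration of the interpolation described above, the sketch below draws $n$ interpolation vectors $\lambda$, one per generated example, and forms convex combinations of all $m$ embeddings and targets of a mini-batch. Sampling $\lambda$ from a Dirichlet distribution, the function name `multimix` and the parameter `alpha` are assumptions of this sketch, not details stated in the abstract. Dense interpolation and self-distillation are not covered.

```python
import numpy as np

def multimix(embeddings, labels_onehot, n, alpha=1.0, rng=None):
    """Generate n convex combinations of a whole mini-batch.

    embeddings:    (m, d) array, one embedding per example
    labels_onehot: (m, c) array, one-hot targets
    Returns (n, d) mixed embeddings and (n, c) mixed targets.
    """
    rng = np.random.default_rng() if rng is None else rng
    m = embeddings.shape[0]
    # One interpolation vector per generated example. Each row is
    # non-negative and sums to 1 (the Dirichlet choice is an assumption).
    lam = rng.dirichlet(alpha * np.ones(m), size=n)  # shape (n, m)
    return lam @ embeddings, lam @ labels_onehot

# Toy mini-batch: m = 8 examples, d = 16 dimensions, c = 4 classes.
rng = np.random.default_rng(0)
m, d, c, n = 8, 16, 4, 1000
z = rng.normal(size=(m, d))
y = np.eye(c)[rng.integers(0, c, size=m)]
z_mix, y_mix = multimix(z, y, n, rng=rng)
```

Because the mixing is a single matrix product on embeddings, generating many more examples than the mini-batch size adds little cost, which matches the motivation given in the abstract.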

@article{R37,

title = {Teach me how to Interpolate a Myriad of Embeddings},

author = {Venkataramanan, Shashanka and Kijak, Ewa and Amsaleg, Laurent and Avrithis, Yannis},

journal = {arXiv preprint arXiv:2206.14868},

month = {6},

year = {2022}

}

Ed. by William Puech

pp. 41-75 Wiley, 2022

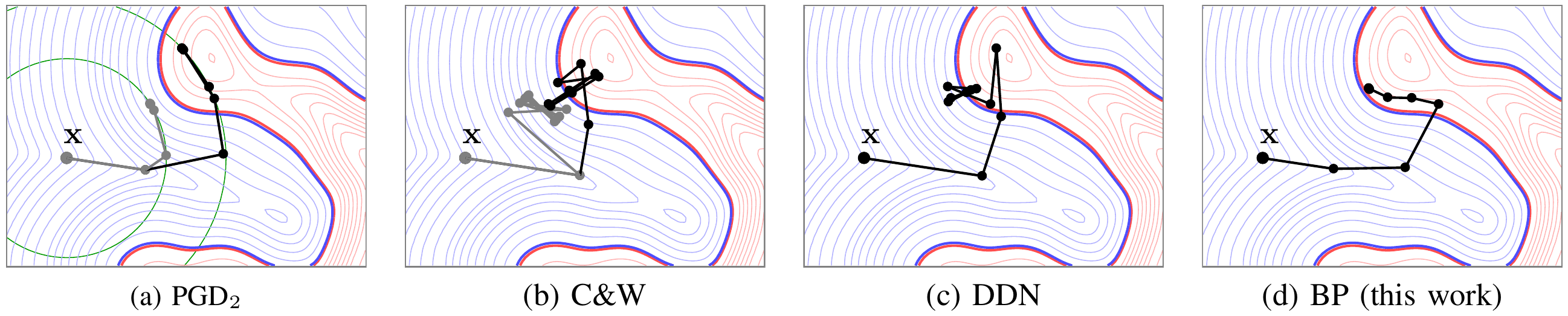

Machine learning using deep neural networks applied to image recognition works extremely well. However, it is possible to modify the images very slightly and intentionally, with modifications almost invisible to the eye, to deceive the classification system into misclassifying such content into the incorrect visual category. This chapter provides an overview of these intentional attacks, as well as the defense mechanisms used to counter them.

@incollection{B9,

title = {Deep Neural Network Attacks and Defense: The Case of Image Classification},

author = {Zhang, Hanwei and Furon, Teddy and Amsaleg, Laurent and Avrithis, Yannis},

publisher = {Wiley},

booktitle = {Multimedia Security 1: Authentication and Data Hiding},

editor = {William Puech},

pages = {41--75},

year = {2022}

}

16:701-713 Sep 2021

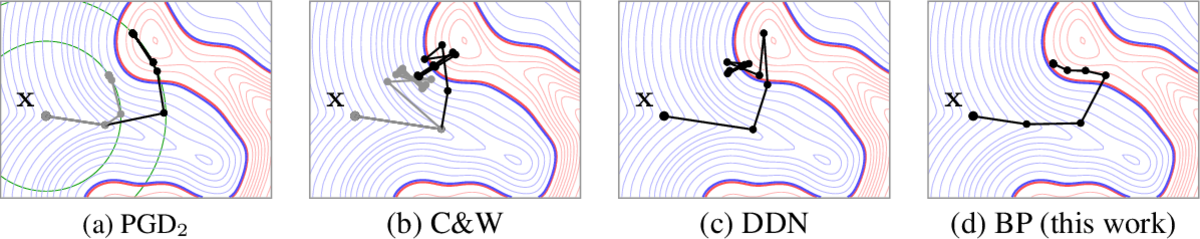

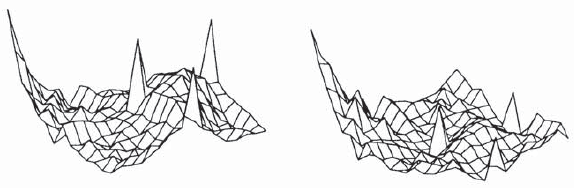

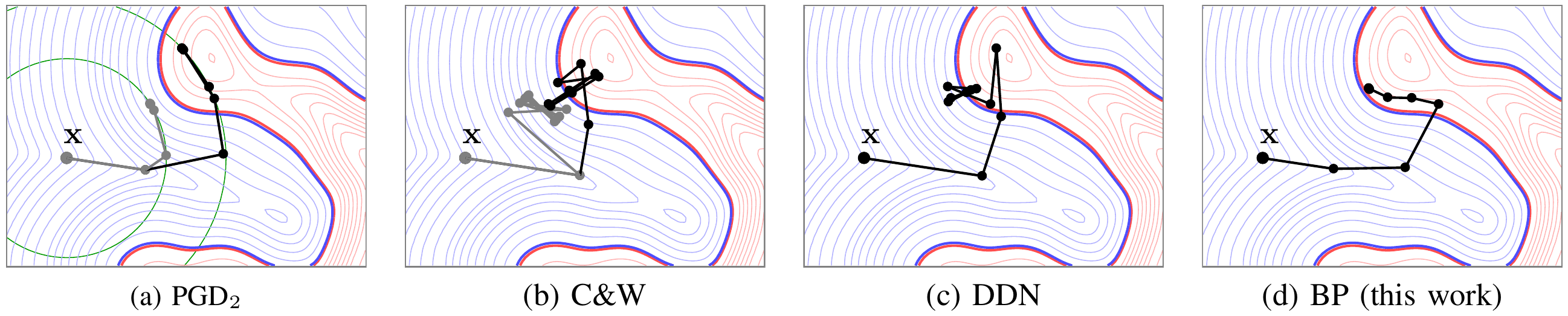

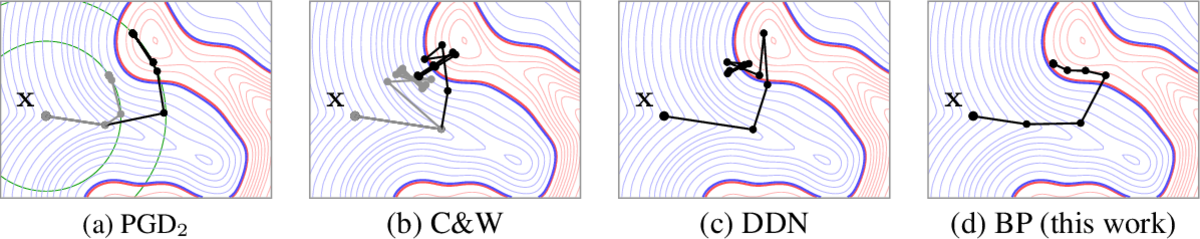

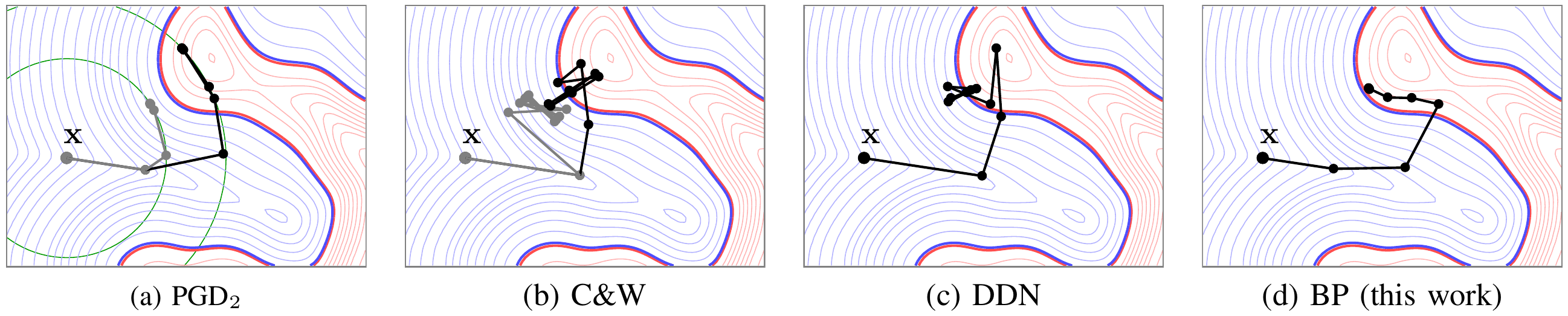

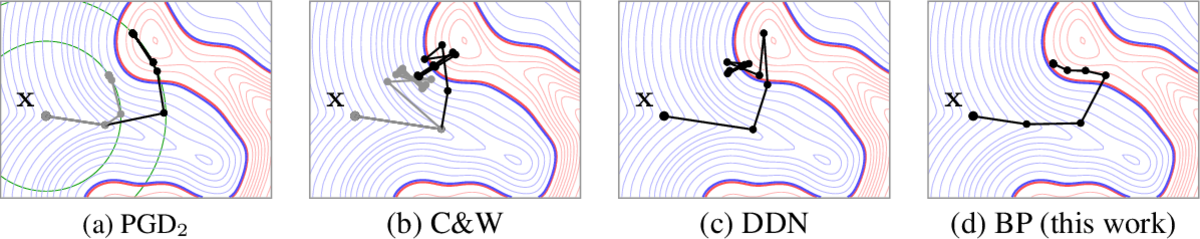

Adversarial examples of deep neural networks are receiving ever increasing attention because they help in understanding and reducing the sensitivity to their input. This is natural given the increasing applications of deep neural networks in our everyday lives. When white-box attacks are almost always successful, it is typically only the distortion of the perturbations that matters in their evaluation. In this work, we argue that speed is important as well, especially when considering that fast attacks are required by adversarial training. Given more time, iterative methods can always find better solutions. We investigate this speed-distortion trade-off in some depth and introduce a new attack called boundary projection (BP) that improves upon existing methods by a large margin. Our key idea is that the classification boundary is a manifold in the image space: we therefore quickly reach the boundary and then optimize distortion on this manifold.

@article{J30,

title = {Walking on the Edge: Fast, Low-Distortion Adversarial Examples},

author = {Zhang, Hanwei and Avrithis, Yannis and Furon, Teddy and Amsaleg, Laurent},

journal = {IEEE Transactions on Information Forensics and Security (TIFS)},

volume = {16},

pages = {701--713},

month = {9},

year = {2021}

}

part of ACM Multimedia Conference

Chengdu, China Oct 2021

Deep Neural Networks (DNNs) are robust against intra-class variability of images, pose variations and random noise, but vulnerable to imperceptible adversarial perturbations that are well-crafted precisely to mislead. While random noise even of relatively large magnitude can hardly affect predictions, adversarial perturbations of very small magnitude can make a classifier fail completely.

To enhance robustness, we introduce a new adversarial defense called patch replacement, which transforms both the input images and their intermediate features at early layers to make adversarial perturbations behave similarly to random noise. We decompose images/features into small patches and quantize them according to a codebook learned from legitimate training images. This maintains the semantic information of legitimate images, while removing as much as possible the effect of adversarial perturbations.

Experiments show that patch replacement improves robustness against both white-box and gray-box attacks, compared with other transformation-based defenses. It has a low computational cost since it does not need training or fine-tuning the network. Importantly, in the white-box scenario, it increases the robustness, while other transformation-based defenses do not.
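The patch quantization step described above can be sketched as follows, under simplifying assumptions: only the input image is transformed (not intermediate features), patches are non-overlapping, and the codebook is a hand-made toy array rather than one learned from training images. The function names `to_patches`, `from_patches` and `patch_replace` are hypothetical.

```python
import numpy as np

def to_patches(img, p):
    """Split an (H, W) image into non-overlapping p x p patches, flattened."""
    h, w = img.shape
    return (img.reshape(h // p, p, w // p, p)
               .transpose(0, 2, 1, 3)
               .reshape(-1, p * p))

def from_patches(patches, shape, p):
    """Inverse of to_patches: reassemble flattened patches into an image."""
    h, w = shape
    return (patches.reshape(h // p, w // p, p, p)
                   .transpose(0, 2, 1, 3)
                   .reshape(h, w))

def patch_replace(img, codebook, p):
    """Replace every patch by its nearest codeword (Euclidean distance)."""
    patches = to_patches(img, p)
    d2 = ((patches[:, None, :] - codebook[None, :, :]) ** 2).sum(-1)
    return from_patches(codebook[d2.argmin(1)], img.shape, p)

# Toy codebook (assumption): four constant 4x4 patches at different grey levels.
p = 4
codebook = np.repeat(np.linspace(0.0, 1.0, 4)[:, None], p * p, axis=1)
clean = from_patches(codebook[[0, 1, 2, 3]], (8, 8), p)

# A small perturbation is removed because each patch snaps back to its codeword.
rng = np.random.default_rng(0)
noisy = clean + 0.05 * rng.normal(size=clean.shape)
restored = patch_replace(noisy, codebook, p)
```

In this toy setting the perturbation is far smaller than the gap between codewords, so quantization recovers the clean image; real images and adversarial perturbations are of course less forgiving.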

@conference{C120,

title = {Patch replacement: A transformation-based method to improve robustness against adversarial attacks},

author = {Zhang, Hanwei and Avrithis, Yannis and Furon, Teddy and Amsaleg, Laurent},

booktitle = {Proceedings of International Workshop on Trustworthy AI for Multimedia Computing (TAI), part of ACM Multimedia Conference (ACM-MM)},

month = {10},

address = {Chengdu, China},

year = {2021}

}

Metric learning involves learning a discriminative representation such that embeddings of similar classes are encouraged to be close, while embeddings of dissimilar classes are pushed far apart. State-of-the-art methods focus mostly on sophisticated loss functions or mining strategies. On the one hand, metric learning losses consider two or more examples at a time. On the other hand, modern data augmentation methods for classification consider two or more examples at a time. The combination of the two ideas is under-studied.

In this work, we aim to bridge this gap and improve representations using mixup, which is a powerful data augmentation approach interpolating two or more examples and corresponding target labels at a time. This task is challenging because, unlike classification, the loss functions used in metric learning are not additive over examples, so the idea of interpolating target labels is not straightforward. To the best of our knowledge, we are the first to investigate mixing examples and target labels for deep metric learning. We develop a generalized formulation that encompasses existing metric learning loss functions and modify it to accommodate for mixup, introducing Metric Mix, or Metrix. We show that mixing inputs, intermediate representations or embeddings along with target labels significantly improves representations and outperforms state-of-the-art metric learning methods on four benchmark datasets.

@article{R33,

title = {It Takes Two to Tango: Mixup for Deep Metric Learning},

author = {Venkataramanan, Shashanka and Psomas, Bill and Avrithis, Yannis and Kijak, Ewa and Amsaleg, Laurent and Karantzalos, Konstantinos},

journal = {arXiv preprint arXiv:2106.04990},

month = {6},

year = {2021}

}

Mixup is a powerful data augmentation method that interpolates between two or more examples in the input or feature space and between the corresponding target labels. Many recent mixup methods focus on cutting and pasting two or more objects into one image, which is more about efficient processing than interpolation. However, how to best interpolate images is not well defined. In this sense, mixup has been connected to autoencoders, because often autoencoders "interpolate well", for instance generating an image that continuously deforms into another.

In this work, we revisit mixup from the interpolation perspective and introduce AlignMix, where we geometrically align two images in the feature space. The correspondences allow us to interpolate between two sets of features, while keeping the locations of one set. Interestingly, this gives rise to a situation where mixup retains mostly the geometry or pose of one image and the texture of the other, connecting it to style transfer. More than that, we show that an autoencoder can still improve representation learning under mixup, without the classifier ever seeing decoded images. AlignMix outperforms state-of-the-art mixup methods on five different benchmarks.

@article{R31,

title = {{AlignMixup}: Improving representations by interpolating aligned features},

author = {Venkataramanan, Shashanka and Avrithis, Yannis and Kijak, Ewa and Amsaleg, Laurent},

journal = {arXiv preprint arXiv:2103.15375},

month = {3},

year = {2021}

}

2020:15-26 Nov 2020

This paper investigates the visual quality of the adversarial examples. Recent papers propose to smooth the perturbations to get rid of high frequency artefacts. In this work, smoothing has a different meaning as it perceptually shapes the perturbation according to the visual content of the image to be attacked. The perturbation becomes locally smooth on the flat areas of the input image, but it may be noisy on its textured areas and sharp across its edges.

This operation relies on Laplacian smoothing, well-known in graph signal processing, which we integrate in the attack pipeline. We benchmark several attacks with and without smoothing under a white-box scenario and evaluate their transferability. Despite the additional constraint of smoothness, our attack has the same probability of success at lower distortion.
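To make the idea of content-dependent smoothing concrete, here is a minimal sketch of generic graph Laplacian regularization on a pixel grid. It is not the exact operator of the paper: the 4-neighbour graph, the Gaussian edge weights, the linear system $(I + \lambda L)\,s = r$ and the parameters `lam` and `sigma` are all assumptions chosen for illustration.

```python
import numpy as np

def grid_laplacian(img, sigma=0.1):
    """Graph Laplacian of a 4-neighbour pixel grid.

    Edge weights are close to 1 between similar pixels (flat areas)
    and close to 0 across strong intensity edges.
    """
    h, w = img.shape
    n = h * w
    idx = np.arange(n).reshape(h, w)
    W = np.zeros((n, n))
    # Horizontal neighbour pairs, then vertical neighbour pairs.
    for a, b, xa, xb in (
        (idx[:, :-1], idx[:, 1:], img[:, :-1], img[:, 1:]),
        (idx[:-1, :], idx[1:, :], img[:-1, :], img[1:, :]),
    ):
        wgt = np.exp(-((xa - xb) ** 2) / sigma ** 2)
        W[a.ravel(), b.ravel()] = wgt.ravel()
        W[b.ravel(), a.ravel()] = wgt.ravel()
    return np.diag(W.sum(1)) - W

def smooth_perturbation(r, img, lam=5.0, sigma=0.1):
    """Smooth perturbation r guided by img by solving (I + lam * L) s = r."""
    L = grid_laplacian(img, sigma)
    s = np.linalg.solve(np.eye(L.shape[0]) + lam * L, r.ravel())
    return s.reshape(r.shape)

# Toy image: two flat halves separated by a sharp vertical edge.
img = np.zeros((8, 8))
img[:, 4:] = 1.0
rng = np.random.default_rng(0)
r = rng.normal(size=img.shape)
s = smooth_perturbation(r, img)
```

The smoothed perturbation varies less within each flat half, while the near-zero weights across the edge let it stay discontinuous there, which is the qualitative behavior the abstract describes.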

@article{J29,

title = {Smooth Adversarial Examples},

author = {Zhang, Hanwei and Avrithis, Yannis and Furon, Teddy and Amsaleg, Laurent},

journal = {EURASIP Journal on Information Security (JIS)},

volume = {2020},

pages = {15--26},

month = {11},

year = {2020}

}

Adversarial examples of deep neural networks are receiving ever increasing attention because they help in understanding and reducing the sensitivity to their input. This is natural given the increasing applications of deep neural networks in our everyday lives. When white-box attacks are almost always successful, it is typically only the distortion of the perturbations that matters in their evaluation.

In this work, we argue that speed is important as well, especially when considering that fast attacks are required by adversarial training. Given more time, iterative methods can always find better solutions. We investigate this speed-distortion trade-off in some depth and introduce a new attack called boundary projection (BP) that improves upon existing methods by a large margin. Our key idea is that the classification boundary is a manifold in the image space: we therefore quickly reach the boundary and then optimize distortion on this manifold.

@article{R25,

title = {Walking on the Edge: Fast, Low-Distortion Adversarial Examples},

author = {Zhang, Hanwei and Avrithis, Yannis and Furon, Teddy and Amsaleg, Laurent},

journal = {arXiv preprint arXiv:1912.02153},

month = {12},

year = {2019}

}

This paper investigates the visual quality of the adversarial examples. Recent papers propose to smooth the perturbations to get rid of high frequency artefacts. In this work, smoothing has a different meaning as it perceptually shapes the perturbation according to the visual content of the image to be attacked. The perturbation becomes locally smooth on the flat areas of the input image, but it may be noisy on its textured areas and sharp across its edges.

This operation relies on Laplacian smoothing, well-known in graph signal processing, which we integrate in the attack pipeline. We benchmark several attacks with and without smoothing under a white-box scenario and evaluate their transferability. Despite the additional constraint of smoothness, our attack has the same probability of success at lower distortion.

@article{R19,

title = {Smooth Adversarial Examples},

author = {Zhang, Hanwei and Avrithis, Yannis and Furon, Teddy and Amsaleg, Laurent},

journal = {arXiv preprint arXiv:1903.11862},

month = {3},

year = {2019}

}

Anagnostopoulos, Evangelos

Santiago, Chile Dec 2015

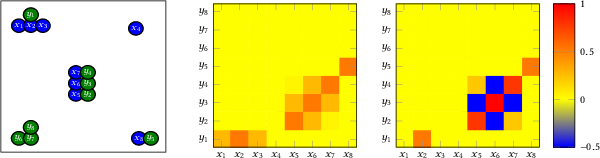

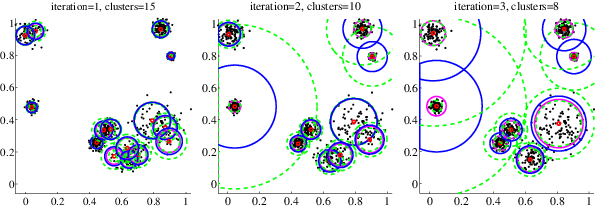

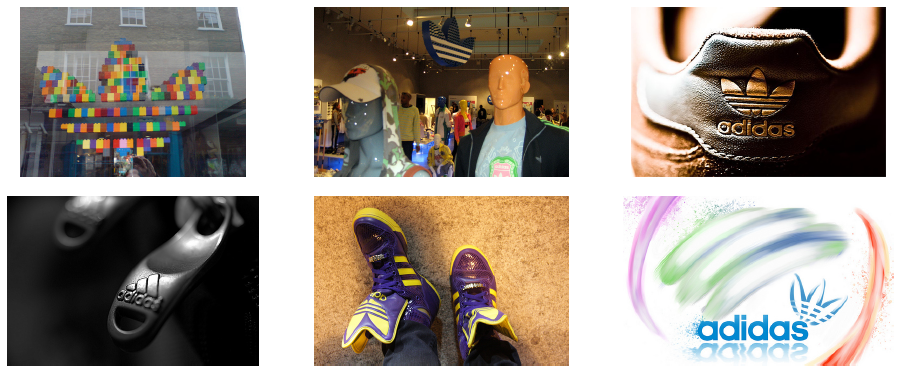

Large scale duplicate detection, clustering and mining of documents or images has been conventionally treated with seed detection via hashing, followed by seed growing heuristics using fast search. Principled clustering methods, especially kernelized and spectral ones, have higher complexity and are difficult to scale above millions. Under the assumption of documents or images embedded in Euclidean space, we revisit recent advances in approximate k-means variants, and borrow their best ingredients to introduce a new one, inverted-quantized k-means (IQ-means). Key underlying concepts are quantization of data points and multi-index based inverted search from centroids to cells. Its quantization is a form of hashing and analogous to seed detection, while its updates are analogous to seed growing, yet principled in the sense of distortion minimization. We further design a dynamic variant that is able to determine the number of clusters k in a single run at nearly zero additional cost. Combined with powerful deep learned representations, we achieve clustering of a 100 million image collection on a single machine in less than one hour.
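The role of quantization can be illustrated with a heavily simplified stand-in: points are mapped to cells of a uniform grid, and k-means then runs on the cell centres weighted by cell population, so the cost per iteration depends on the number of occupied cells rather than the number of points. This is not IQ-means itself: the uniform grid, the use of cell centres, the brute-force centroid-to-cell distances (instead of multi-index inverted search) and the function names are all assumptions of this sketch, and the dynamic estimation of k is omitted.

```python
import numpy as np

def quantize(points, step):
    """Map points to uniform grid cells; return cell centres and populations."""
    keys = np.floor(points / step).astype(int)
    cells, counts = np.unique(keys, axis=0, return_counts=True)
    return (cells + 0.5) * step, counts

def weighted_kmeans(cells, counts, k, iters=20, rng=None):
    """k-means over cell centres, each weighted by its population."""
    rng = np.random.default_rng() if rng is None else rng
    centroids = cells[rng.choice(len(cells), size=k, replace=False)]
    for _ in range(iters):
        # Brute-force squared distances from every cell to every centroid.
        d2 = ((cells[:, None, :] - centroids[None, :, :]) ** 2).sum(-1)
        assign = d2.argmin(1)
        for j in range(k):
            mask = assign == j
            if mask.any():
                # Population-weighted mean of the cells assigned to centroid j.
                centroids[j] = np.average(cells[mask], axis=0,
                                          weights=counts[mask])
    return centroids, assign

# Toy data: two well-separated 2D blobs of 500 points each.
rng = np.random.default_rng(0)
points = np.concatenate([rng.normal(0.0, 0.3, size=(500, 2)),
                         rng.normal(5.0, 0.3, size=(500, 2))])
cells, counts = quantize(points, step=0.25)
centroids, assign = weighted_kmeans(cells, counts, k=2, rng=rng)
```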

@conference{C99,

title = {Web-scale image clustering revisited},

author = {Avrithis, Yannis and Kalantidis, Yannis and Anagnostopoulos, Evangelos and Emiris, Ioannis},

booktitle = {Proceedings of International Conference on Computer Vision (ICCV) (Oral)},

month = {12},

address = {Santiago, Chile},

year = {2015}

}

Andreou, Georgios

Hamburg, Germany Sep 2003

Extraction of visual descriptors is a crucial problem for state-of-the-art visual information analysis. In this paper, we present a knowledge-based approach for detection of visual objects in video sequences. The proposed approach models objects through their visual descriptors defined in MPEG-7. It first extracts moving regions using an efficient active contours technique. It then computes visual descriptions of the moving regions including color features, shape features which are invariant to affine transformations, as well as motion features. The extracted features are matched to a-priori knowledge about the objects' descriptions, using appropriately defined matching functions. Results are presented which illustrate the theoretical developments.

@conference{C26,

title = {Intelligent Visual Descriptor Extraction from Video Sequences},

author = {Tzouveli, Paraskevi and Andreou, Georgios and Tsechpenakis, Gabriel and Avrithis, Yannis and Kollias, Stefanos},

booktitle = {Proceedings of 1st International Workshop on Adaptive Multimedia Retrieval (AMR)},

month = {9},

address = {Hamburg, Germany},

year = {2003}

}

Asano, Yuki M.

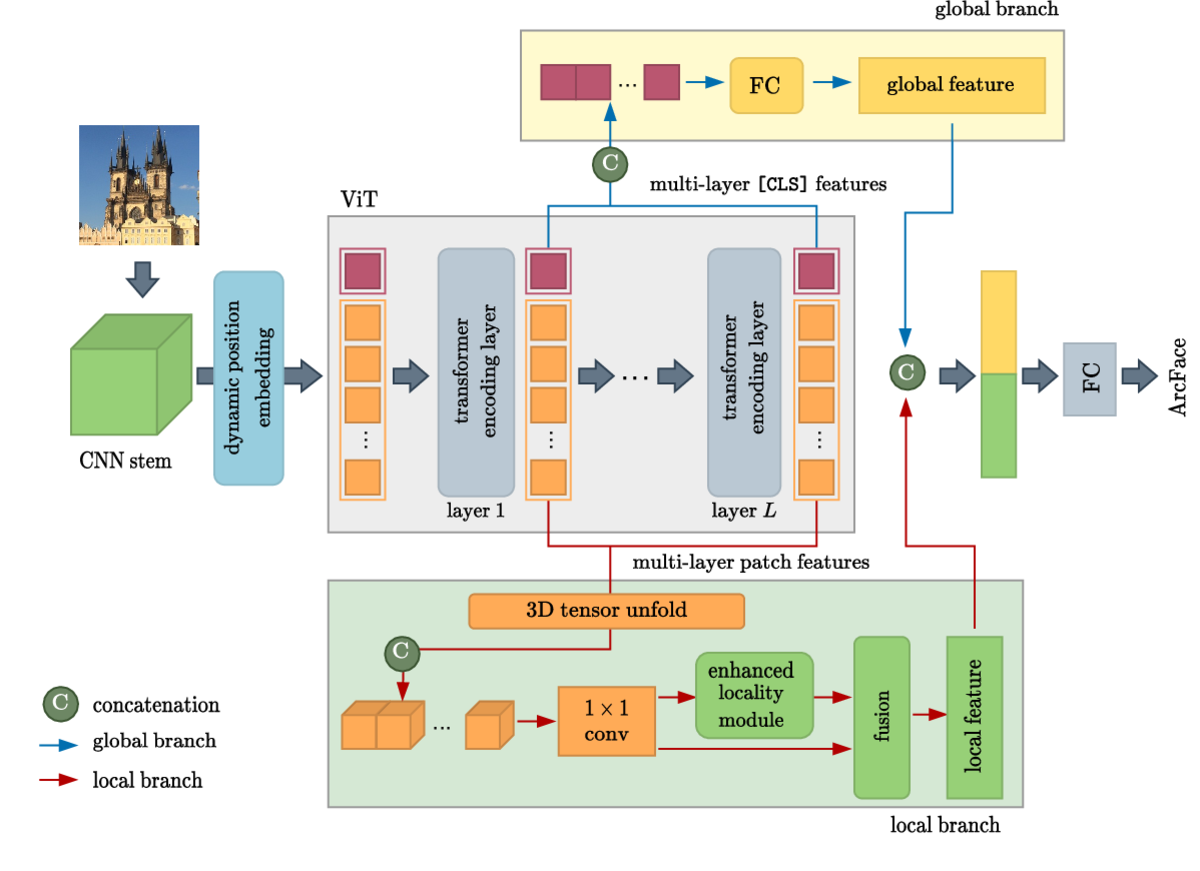

Vienna, Austria May 2024

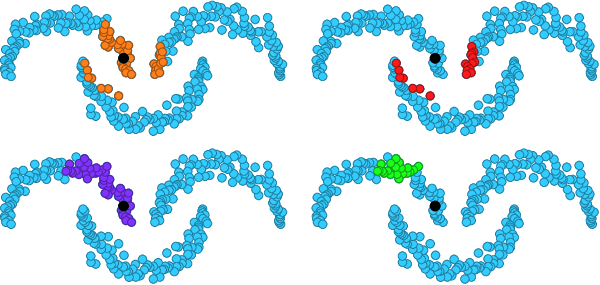

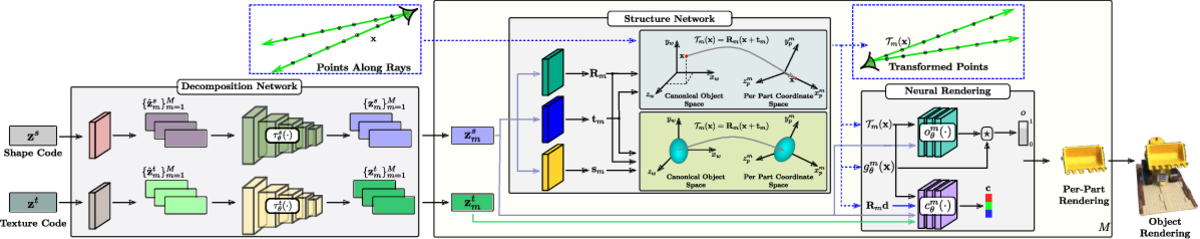

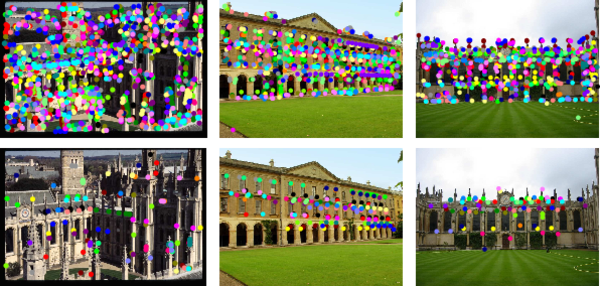

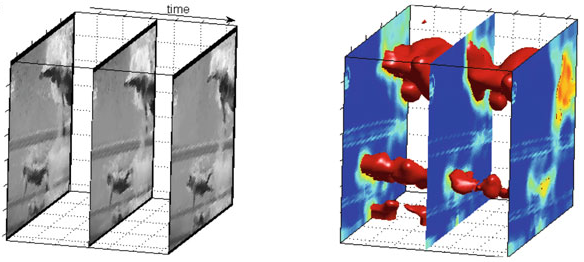

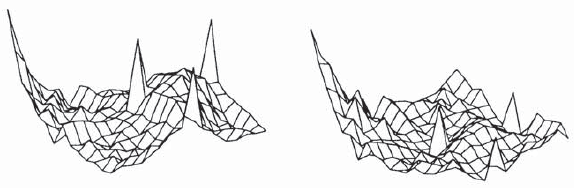

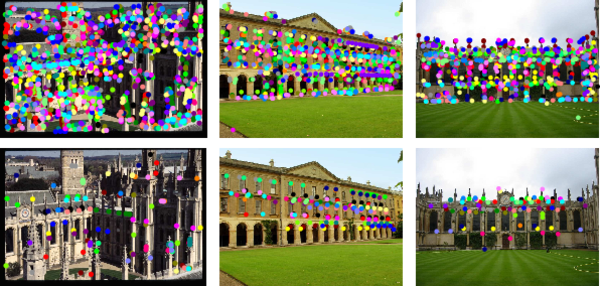

Self-supervised learning has unlocked the potential of scaling up pretraining to billions of images, since annotation is unnecessary. But are we making the best use of data? How much more economical can we be? In this work, we attempt to answer this question by making two contributions. First, we investigate first-person videos and introduce a "Walking Tours" dataset. These videos are high-resolution, hours-long, captured in a single uninterrupted take, depicting a large number of objects and actions with natural scene transitions. They are unlabeled and uncurated, thus realistic for self-supervision and comparable with human learning.

Second, we introduce a novel self-supervised image pretraining method tailored for learning from continuous videos. Existing methods typically adapt image-based pretraining approaches to incorporate more frames. Instead, we advocate a "tracking to learn to recognize" approach. Our method, called DoRA, leads to attention maps that DiscOver and tRAck objects over time in an end-to-end manner, using transformer cross-attention. We derive multiple views from the tracks and use them in a classical self-supervised distillation loss. Using our novel approach, a single Walking Tours video remarkably becomes a strong competitor to ImageNet for several image and video downstream tasks.

@conference{C134,

title = {Is ImageNet worth 1 video? Learning strong image encoders from 1 long unlabelled video},

author = {Venkataramanan, Shashanka and Rizve, Mamshad Nayeem and Carreira, Jo\~ao and Asano, Yuki M. and Avrithis, Yannis},

booktitle = {Proceedings of International Conference on Learning Representations (ICLR) (Oral). Outstanding Paper Honorable Mention},

month = {5},

address = {Vienna, Austria},

year = {2024}

}

Self-supervised learning has unlocked the potential of scaling up pretraining to billions of images, since annotation is unnecessary. But are we making the best use of data? How much more economical can we be? In this work, we attempt to answer this question by making two contributions. First, we investigate first-person videos and introduce a "Walking Tours" dataset. These videos are high-resolution, hours-long, captured in a single uninterrupted take, depicting a large number of objects and actions with natural scene transitions. They are unlabeled and uncurated, thus realistic for self-supervision and comparable with human learning.

Second, we introduce a novel self-supervised image pretraining method tailored for learning from continuous videos. Existing methods typically adapt image-based pretraining approaches to incorporate more frames. Instead, we advocate a "tracking to learn to recognize" approach. Our method, called DoRA, leads to attention maps that DiscOver and tRAck objects over time in an end-to-end manner, using transformer cross-attention. We derive multiple views from the tracks and use them in a classical self-supervised distillation loss. Using our novel approach, a single Walking Tours video remarkably becomes a strong competitor to ImageNet for several image and video downstream tasks.

@article{R44,

title = {Is ImageNet worth 1 video? Learning strong image encoders from 1 long unlabelled video},

author = {Venkataramanan, Shashanka and Rizve, Mamshad Nayeem and Carreira, Jo\~ao and Asano, Yuki M. and Avrithis, Yannis},

journal = {arXiv preprint arXiv:2310.08584},

month = {10},

year = {2023}

}

Athanasiadis, Thanos

Sophia Antipolis, France Jan 2009

In this paper we propose a methodology for semantic indexing of images, based on techniques of image segmentation, classification and fuzzy reasoning. The proposed knowledge-assisted analysis architecture integrates algorithms applied on three overlapping levels of semantic information: i) no semantics, i.e. segmentation based on low-level features such as color and shape, ii) mid-level semantics, such as concurrent image segmentation and object detection and region-based classification, and iii) rich semantics, i.e. fuzzy reasoning for extraction of implicit knowledge. In that way, we extract a semantic description of raw multimedia content and use it for indexing and retrieval purposes, backed up by a fuzzy knowledge repository. We conducted several experiments to evaluate each technique, as well as the methodology as a whole, and the results show the potential of our approach.

@conference{C81,

title = {Integrating Image Segmentation and Classification for Fuzzy Knowledge-based Multimedia Indexing},

author = {Athanasiadis, Thanos and Simou, Nikolaos and Papadopoulos, Georgios and Benmokhtar, Rachid and Chandramouli, Krishna and Tzouvaras, Vassilis and Mezaris, Vasileios and Phinikettos, Marios and Avrithis, Yannis and Kompatsiaris, Yiannis and Huet, Benoit and Izquierdo, Ebroul},

booktitle = {Proceedings of 15th International Multimedia Modeling Conference (MMM)},

month = {1},

pages = {263--274},

address = {Sophia Antipolis, France},

year = {2009}

}

Ed. by R. Troncy, B. Huet, S. Schenk

pp. 163-181 Wiley, 2009

In this chapter a first attempt will be made to examine how the coupling of multimedia processing and knowledge representation techniques, presented separately in previous chapters, can improve analysis. No formal reasoning techniques will be introduced at this stage; our exploration of how multimedia analysis and knowledge can be combined will start by revisiting the image and video segmentation problem. Semantic segmentation, presented in the first section of this chapter, starts with an elementary segmentation and region classification and refines it using similarity measures and merging criteria defined at the semantic level. Our discussion will continue in the next sections of the chapter with knowledge-driven classification approaches, which exploit knowledge in the form of contextual information for refining elementary classification results obtained via machine learning. Two relevant approaches will be presented. The first one deals with visual context and treats it as interaction between global classification and local region labels. The second one deals with spatial context and formulates the exploitation of it as a global optimization problem.

@incollection{B7,

title = {Knowledge Driven Segmentation and Classification},

author = {Athanasiadis, Thanos and Mylonas, Phivos and Papadopoulos, Georgios and Mezaris, Vasileios and Avrithis, Yannis and Kompatsiaris, Ioannis and Strintzis, Michael G.},

publisher = {Wiley},

booktitle = {Multimedia Semantics: Metadata, Analysis and Interaction},

editor = {R. Troncy and B. Huet and S. Schenk},

pages = {163--181},

year = {2009}

}

39(3):293-327 Sep 2008

In this paper we present a framework for unified, personalized access to heterogeneous multimedia content in distributed repositories. Focusing on semantic analysis of multimedia documents, metadata, user queries and user profiles, it contributes to the bridging of the gap between the semantic nature of user queries and raw multimedia documents. The proposed approach takes visual content analysis results as input, and also analyzes and exploits associated textual annotation, in order to extract the underlying semantics, construct a semantic index and classify documents to topics, based on a unified knowledge and semantics representation model. It may then accept user queries and, carrying out semantic interpretation and expansion, retrieve documents from the index and rank them according to user preferences, similarly to text retrieval. All processes are based on a novel semantic processing methodology, employing fuzzy algebra and principles of taxonomic knowledge representation. Part I of this work, presented in this paper, deals with data and knowledge models, manipulation of multimedia content annotations and semantic indexing, while Part II will continue on the use of the extracted semantic information for personalized retrieval.

@article{J15,

title = {Semantic Representation of Multimedia Content: Knowledge Representation and Semantic Indexing},

author = {Mylonas, Phivos and Athanasiadis, Thanos and Wallace, Manolis and Avrithis, Yannis and Kollias, Stefanos},

journal = {Multimedia Tools and Applications (MTAP)},

publisher = {Springer},

volume = {39},

number = {3},

month = {9},

pages = {293--327},

year = {2008}

}

Cairns, Australia Oct 2008

In this paper, we propose a framework to extend semantic labeling of images to video shot sequences and achieve efficient and semantic-aware spatiotemporal video segmentation. This task faces two major challenges, namely the temporal variations within a video sequence which affect image segmentation and labeling, and the computational cost of region labeling. Guided by these limitations, we design a method where spatiotemporal segmentation and object labeling are coupled to achieve semantic annotation of video shots. An internal graph structure that describes both visual and semantic properties of image and video regions is adopted. The process of spatiotemporal semantic segmentation is subdivided into two stages. First, the video shot is split into small blocks of frames. Spatiotemporal regions (volumes) are extracted and labeled individually within each block. Then, we iteratively merge consecutive blocks by a matching procedure which considers both semantic and visual properties. Results on real video sequences show the potential of our approach.

@conference{C75,

title = {Spatiotemporal Semantic Video Segmentation},

author = {Galmar, Eric and Athanasiadis, Thanos and Huet, Benoit and Avrithis, Yannis},

publisher = {IEEE},

booktitle = {Proceedings of 10th International Workshop on Multimedia Signal Processing (MMSP)},

month = {10},

address = {Cairns, Australia},

year = {2008}

}

17(3):298-312 Mar 2007

In this paper we present a framework for simultaneous image segmentation and object labeling leading to automatic image annotation. Focusing on semantic analysis of images, it contributes to knowledge-assisted multimedia analysis and the bridging of the gap between its semantics and low level visual features. The proposed framework operates at semantic level using possible semantic labels, formally defined as fuzzy sets, to make decisions on handling image regions instead of visual features used traditionally. In order to stress its independence of a specific image segmentation approach we have modified two well known region growing algorithms, i.e. watershed and recursive shortest spanning tree, and compared them with their traditional counterparts. Additionally, a visual context representation and analysis approach is presented, blending global knowledge in interpreting each object locally. Contextual information is based on a novel semantic processing methodology, employing fuzzy algebra and ontological taxonomic knowledge representation. In this process, utilization of contextual knowledge re-adjusts semantic region growing labeling results appropriately, by means of fine-tuning the membership degrees of detected concepts. The performance of the overall methodology is demonstrated on a real-life still image dataset from two popular domains.

@article{J13,

title = {Semantic Image Segmentation and Object Labeling},

author = {Athanasiadis, Thanos and Mylonas, Phivos and Avrithis, Yannis and Kollias, Stefanos},

journal = {IEEE Transactions on Circuits and Systems for Video Technology (CSVT)},

volume = {17},

number = {3},

month = {3},

pages = {298--312},

year = {2007}

}

36(1):34-52 Jan 2006

During the last few years numerous multimedia archives have made extensive use of digitized storage and annotation technologies. Still, the development of single points of access, providing common and uniform access to their data, despite the efforts and accomplishments of standardization organizations, has remained an open issue, as it involves the integration of various large scale heterogeneous and heterolingual systems. In this paper, we describe a mediator system that achieves architectural integration through an extended 3-tier architecture and content integration through semantic modeling. The described system has successfully integrated five multimedia archives, quite different in nature and content from each other, while also providing for easy and scalable inclusion of more archives in the future.

@article{J7,

title = {Integrating Multimedia Archives: The Architecture and the Content Layer},

author = {Wallace, Manolis and Athanasiadis, Thanos and Avrithis, Yannis and Delopoulos, Anastasios and Kollias, Stefanos},

journal = {IEEE Transactions on Systems, Man, and Cybernetics, Part A: Systems and Humans (SMC-A)},

volume = {36},

number = {1},

month = {1},

pages = {34--52},

year = {2006}

}

Athens, Greece Dec 2006

In this paper, we propose the application of rule-based reasoning for knowledge assisted image segmentation and object detection. A region merging approach is proposed based on fuzzy labeling and not on visual descriptors, while reasoning is used in evaluation of dissimilarity between adjacent regions according to rules applied on local information.

@conference{C60,

title = {Rule-based Reasoning for Semantic Image Segmentation and Interpretation},

author = {Berka, Petr and Athanasiadis, Thanos and Avrithis, Yannis},

publisher = {CEUR-WS},

booktitle = {Poster \& Demo Proceedings of 1st International Conference on Semantics And digital Media Technology (SAMT)},

month = {12},

pages = {39--40},

address = {Athens, Greece},

year = {2006}

}

Athens, Greece Dec 2006

In this paper we present a framework for simultaneous image segmentation and region labeling leading to automatic image annotation. The proposed framework operates at semantic level using possible semantic labels to make decisions on handling image regions instead of visual features used traditionally. In order to stress its independence of a specific image segmentation approach we applied our idea on two region growing algorithms, i.e. watershed and recursive shortest spanning tree. Additionally we exploit the notion of visual context by employing fuzzy algebra and ontological taxonomic knowledge representation, incorporating in this way global information and improving region interpretation. In this process, semantic region growing labeling results are being re-adjusted appropriately, utilizing contextual knowledge in the form of domain-specific semantic concepts and relations. The performance of the overall methodology is demonstrated on a real-life still image dataset from the popular domains of beach holidays and motorsports.

@conference{C59,

title = {A Context-based Region Labeling Approach for Semantic Image Segmentation},

author = {Athanasiadis, Thanos and Mylonas, Phivos and Avrithis, Yannis},

booktitle = {Proceedings of 1st International Conference on Semantics And digital Media Technology (SAMT)},

month = {12},

pages = {212--225},

address = {Athens, Greece},

year = {2006}

}

Budapest, Hungary Sep 2006

Tackling the problems of automatic object recognition and/or scene classification with generic algorithms is not producing efficient and reliable results in the field of image analysis. Restricting the problem to a specific domain is a common approach to cope with this, still unresolved, issue. In this paper we propose a methodology to improve the results of image analysis, based on available contextual information derived from the popular sports domain. Our research efforts include application of a knowledge-assisted image analysis algorithm that utilizes an ontology infrastructure to handle knowledge and MPEG-7 visual descriptors for region labeling. A novel ontological representation for context is introduced, combining fuzziness with Semantic Web characteristics, such as RDF. Initial region labeling analysis results are then being re-adjusted appropriately according to a confidence value readjustment algorithm, by means of fine-tuning the degrees of confidence of each detected region label. In this process contextual knowledge in the form of domain-specific semantic concepts and relations is utilized. Performance of the overall methodology is demonstrated through its application on a real-life still image dataset derived from the tennis sub-domain.

@conference{C56,

title = {Image Analysis Using Domain Knowledge and Visual Context},

author = {Mylonas, Phivos and Athanasiadis, Thanos and Avrithis, Yannis},

booktitle = {Proceedings of 13th International Conference on Systems, Signals and Image Processing (IWSSIP)},

month = {9},

address = {Budapest, Hungary},

year = {2006}

}

part of 15th World Wide Web Conference

Edinburgh, UK May 2006

In this position paper we examine the limitation of region growing segmentation techniques to extract semantically meaningful objects from an image. We propose a region growing algorithm that performs on a semantic level, driven by the knowledge of what each region represents at every iteration step of the merging process. This approach utilizes simultaneous segmentation and labeling of regions leading to automatic image annotation.

@conference{C50,

title = {A Semantic Region Growing Approach in Image Segmentation and Annotation},

author = {Athanasiadis, Thanos and Avrithis, Yannis and Kollias, Stefanos},

booktitle = {Proceedings of 1st International Workshop on Semantic Web Annotations for Multimedia (SWAMM), part of 15th World Wide Web Conference (WWW)},

month = {5},

address = {Edinburgh, UK},

year = {2006}

}

Seoul, Korea Apr 2006

Generic algorithms for automatic object recognition and/or scene classification are unfortunately not producing reliable and robust results. A common approach to cope with this, still unresolved, issue is to restrict the problem at hand to a specific domain. In this paper we propose an algorithm to improve the results of image analysis, based on the contextual information we have, which relates the detected concepts to any given domain. Initial results produced by the image analysis module are domain-specific semantic concepts and are being re-adjusted appropriately by the suggested algorithm, by means of fine-tuning the degrees of confidence of each detected concept. The novelty of the presented work is twofold: i) the knowledge-assisted image analysis algorithm, that utilizes an ontology infrastructure to handle the knowledge and MPEG-7 visual descriptors for the region labeling and ii) the context-driven re-adjustment of the degrees of confidence of the detected labels.

@conference{C49,

title = {Improving Image Analysis using a Contextual Approach},

author = {Mylonas, Phivos and Athanasiadis, Thanos and Avrithis, Yannis},

booktitle = {Proceedings of 7th International Workshop on Image Analysis for Multimedia Interactive Services (WIAMIS)},

month = {4},

address = {Seoul, Korea},

year = {2006}

}

part of 4th International Semantic Web Conference

Galway, Ireland Nov 2005

In this paper we discuss the use of knowledge for the automatic extraction of semantic metadata from multimedia content. For the representation of knowledge we extended and enriched current general-purpose ontologies to include low-level visual features. More specifically, we implemented a tool that links MPEG-7 visual descriptors to high-level, domain-specific concepts. For the exploitation of this knowledge infrastructure we developed an experimentation platform that allows us to analyze multimedia content and automatically create the associated semantic metadata, as well as to test, validate and refine the ontologies built. We pursued a tight and functional integration of the knowledge base and the analysis modules, putting them in a loop of constant interaction instead of one being just a pre- or post-processing step of the other.

@conference{C47,

title = {Using a Multimedia Ontology Infrastructure for Semantic Annotation of Multimedia Content},

author = {Athanasiadis, Thanos and Tzouvaras, Vassilis and Petridis, Kosmas and Precioso, Frederic and Avrithis, Yannis and Kompatsiaris, Yiannis},

publisher = {CEUR-WS},

booktitle = {Proceedings of 5th International Workshop on Knowledge Markup and Semantic Annotation, (SemAnnot), part of 4th International Semantic Web Conference (ISWC)},

month = {11},

pages = {59--68},

address = {Galway, Ireland},

year = {2005}

}

Koblenz, Germany Sep 2005

Knowledge representation and annotation of multimedia documents typically have been pursued in two different directions. Previous approaches have focused either on low level descriptors, such as dominant color, or on the content dimension and corresponding manual annotations, such as person or vehicle. In this paper, we present a knowledge infrastructure to bridge the gap between the two directions. Ontologies are being extended and enriched to include low-level audiovisual features and descriptors. Additionally, a tool for linking low-level MPEG-7 visual descriptions to ontologies and annotations has been developed. In this way, we construct ontologies that include prototypical instances of domain concepts together with a formal specification of the corresponding visual descriptors. Thus, we combine high-level domain concepts and low-level multimedia descriptions, enabling new kinds of media content analysis.

@conference{C46,

title = {Combined Domain Specific and Multimedia Ontologies for Image Understanding},

author = {Petridis, Kosmas and Precioso, Frederic and Athanasiadis, Thanos and Avrithis, Yannis and Kompatsiaris, Yiannis},

booktitle = {Proceedings of 28th German Conference on Artificial Intelligence (KI)},

month = {9},

address = {Koblenz, Germany},

year = {2005}

}

Montreux, Switzerland Apr 2005

Efficient video content management and exploitation requires extraction of the underlying semantics, which is a non-trivial task involving the association of low-level features with high-level concepts. In this paper, a knowledge-assisted approach for extracting semantic information of domain-specific video content is presented. Domain knowledge considers both low-level visual features (color, motion, shape) and spatial information (topological and directional relations). An initial segmentation algorithm generates a set of over-segmented atom-regions, and a neural network is used to estimate the similarity distance between the extracted atom-region descriptors and those of the object models included in the domain ontology. A genetic algorithm is then applied in order to find the optimal interpretation according to the domain conceptualization. The proposed approach was tested on the Tennis and Formula One domains with promising results.

@conference{C36,

title = {Knowledge-Assisted Video Analysis Using A Genetic Algorithm},

author = {Voisine, Nicolas and Dasiopoulou, Stamatia and Mezaris, Vasileios and Spyrou, Evaggelos and Athanasiadis, Thanos and Kompatsiaris, Ioannis and Avrithis, Yannis and Strintzis, Michael G.},

booktitle = {Proceedings of 6th International Workshop on Image Analysis for Multimedia Interactive Services (WIAMIS)},

month = {4},

address = {Montreux, Switzerland},

year = {2005}

}

Athens, Greece Nov 2004

In this paper, an integrated information system is presented that offers enhanced search and retrieval capabilities to users of hetero-lingual digital audiovisual (a/v) archives. This innovative system exploits the advances in handling a/v content and related metadata, as introduced by MPEG-4 and worked out by MPEG-7, to offer advanced services characterized by the tri-fold "semantic phrasing of the request (query)", "unified handling" and "personalized response". The proposed system targets the intelligent extraction of semantic information from a/v and text related data, taking into account the nature of the queries that users may issue and the context determined by user profiles.

@conference{C34,

title = {A mediator system for hetero-lingual audiovisual content},

author = {Wallace, Manolis and Athanasiadis, Thanos and Avrithis, Yannis and Stamou, Giorgos and Kollias, Stefanos},

booktitle = {Proceedings of International Conference on Multi-platform e-Publishing (MPEP)},

month = {11},

address = {Athens, Greece},

year = {2004}

}

Dublin, Ireland Jul 2004

This paper presents FAETHON, a distributed information system that offers enhanced search and retrieval capabilities to users interacting with digital audiovisual (a/v) archives. Its novelty primarily originates in the unified intelligent access to heterogeneous a/v content. The paper emphasizes the features that provide enhanced search and retrieval capabilities to users, as well as intelligent management of the a/v content by content creators / distributors. It describes the system's main components: the intelligent metadata creation package, the a/v search engine & portal, and the MPEG-7 compliant a/v archive interfaces. Finally, it provides ideas on the positioning of FAETHON in the market of a/v archives and video indexing and retrieval.

@conference{C29,

title = {Adding Semantics to Audiovisual Content: The {FAETHON} Project},

author = {Athanasiadis, Thanos and Avrithis, Yannis},

booktitle = {Proceedings of 3rd International Conference for Image and Video Retrieval (CIVR)},

month = {7},

pages = {665--673},

address = {Dublin, Ireland},

year = {2004}

}

Dublin, Ireland Jul 2004

In this paper we discuss the use of knowledge for the analysis and semantic retrieval of video. We follow a fuzzy relational approach to knowledge representation, based on which we define and extract the context of either a multimedia document or a user query. During indexing, the context of the document is utilized for the detection of objects and for automatic thematic categorization. During retrieval, the context of the query is used to clarify the exact meaning of the query terms and to meaningfully guide the process of query expansion and index matching. Indexing and retrieval tools have been implemented to demonstrate the proposed techniques and results are presented using video from audiovisual archives.

@conference{C28,

title = {Knowledge Assisted Analysis and Categorization for Semantic Video Retrieval},

author = {Wallace, Manolis and Athanasiadis, Thanos and Avrithis, Yannis},

booktitle = {Proceedings of 3rd International Conference for Image and Video Retrieval (CIVR)},

month = {7},

pages = {555--563},

address = {Dublin, Ireland},

year = {2004}

}

Ayache, Stephane

248:104101 Nov 2024

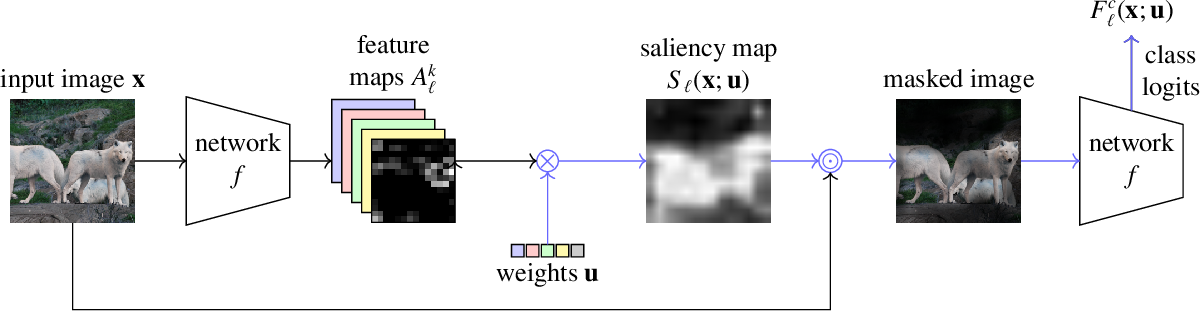

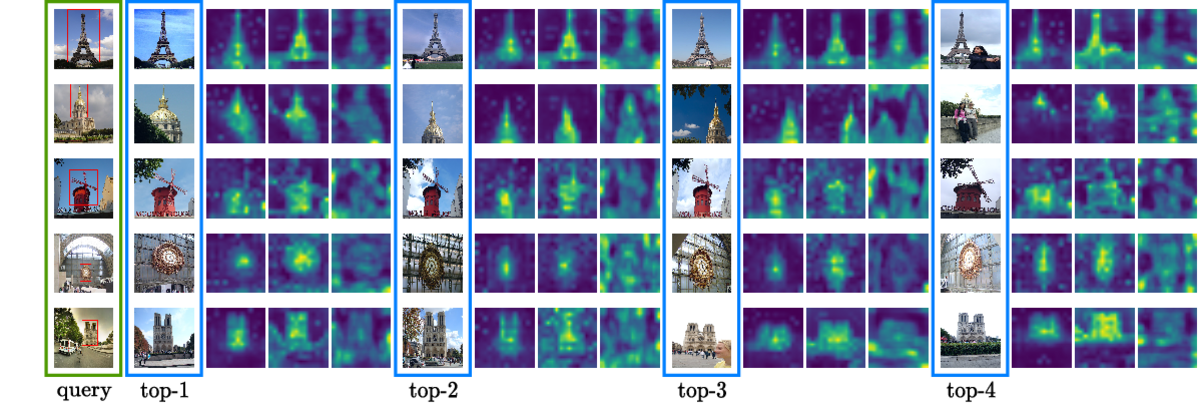

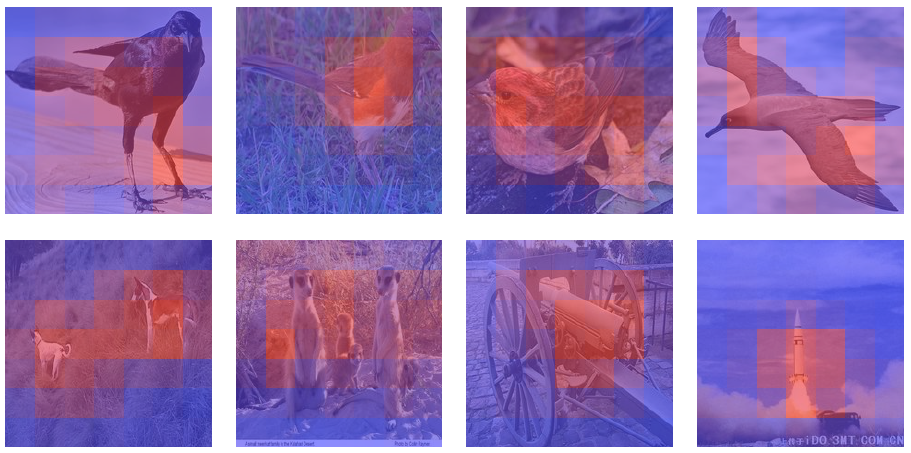

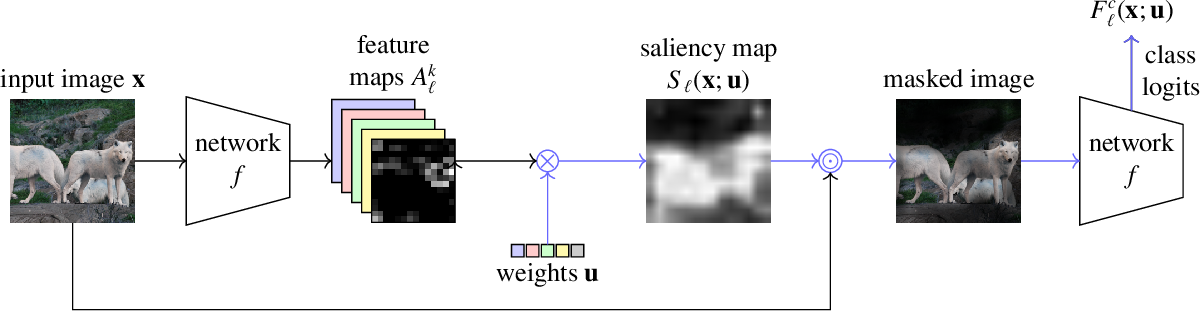

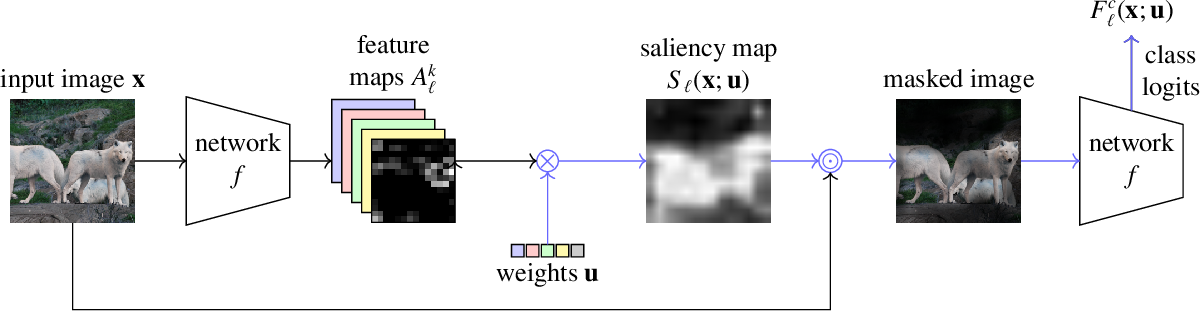

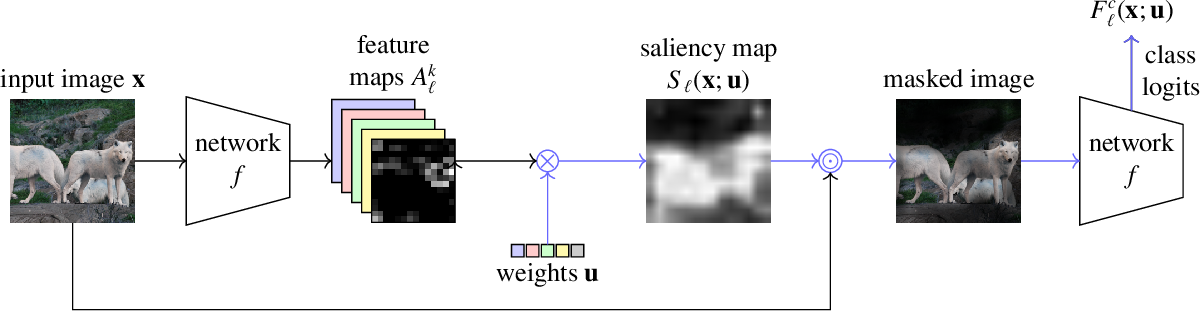

Methods based on class activation maps (CAM) provide a simple mechanism to interpret predictions of convolutional neural networks by using linear combinations of feature maps as saliency maps. By contrast, masking-based methods optimize a saliency map directly in the image space or learn it by training another network on additional data.

In this work we introduce Opti-CAM, combining ideas from CAM-based and masking-based approaches. Our saliency map is a linear combination of feature maps, where weights are optimized per image such that the logit of the masked image for a given class is maximized. We also fix a fundamental flaw in two of the most common evaluation metrics of attribution methods. On several datasets, Opti-CAM largely outperforms other CAM-based approaches according to the most relevant classification metrics. We provide empirical evidence supporting that localization and classifier interpretability are not necessarily aligned.

@article{J32,

title = {Opti-{CAM}: Optimizing saliency maps for interpretability},

author = {Zhang, Hanwei and Torres, Felipe and Sicre, Ronan and Avrithis, Yannis and Ayache, Stephane},

journal = {Computer Vision and Image Understanding (CVIU)},

volume = {248},

pages = {104101},

month = {11},

year = {2024}

}

part of IEEE Conference on Computer Vision and Pattern Recognition

Seattle, WA, US Jun 2024

Explanations obtained from transformer-based architectures, in the form of raw attention, can be seen as a class agnostic saliency map. Additionally, attention-based pooling serves as a form of masking in feature space. Motivated by this observation, we design an attention-based pooling mechanism intended to replace global average pooling during inference. This mechanism, called Cross Attention Stream (CA-Stream), comprises a stream of cross attention blocks interacting with features at different network levels. CA-Stream enhances interpretability properties in existing image recognition models, while preserving their recognition properties.

@conference{C137,

title = {{CA}-Stream: Attention-based pooling for interpretable image recognition},

author = {Torres Figueroa, Felipe and Zhang, Hanwei and Sicre, Ronan and Ayache, Stephane and Avrithis, Yannis},

booktitle = {Proceedings of 3rd Workshop on Explainable AI for Computer Vision (XAI4CV), part of IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {6},

address = {Seattle, WA, US},

year = {2024}

}

Rome, Italy Feb 2024

This paper studies interpretability of convolutional networks by means of saliency maps. Most approaches based on Class Activation Maps (CAM) combine information from fully connected layers and gradient through variants of backpropagation. However, it is well understood that gradients are noisy and alternatives like guided backpropagation have been proposed to obtain better visualization at inference. In this work, we present a novel training approach to improve the quality of gradients for interpretability. In particular, we introduce a regularization loss such that the gradient with respect to the input image obtained by standard backpropagation is similar to the gradient obtained by guided backpropagation. We find that the resulting gradient is qualitatively less noisy and improves quantitatively the interpretability properties of different networks, using several interpretability methods.

@conference{C133,

title = {A Learning Paradigm for Interpretable Gradients},

author = {Torres Figueroa, Felipe and Zhang, Hanwei and Sicre, Ronan and Avrithis, Yannis and Ayache, Stephane},

booktitle = {Proceedings of International Conference on Computer Vision Theory and Applications (VISAPP) (Oral)},

month = {2},

address = {Rome, Italy},

year = {2024}

}

This paper studies interpretability of convolutional networks by means of saliency maps. Most approaches based on Class Activation Maps (CAM) combine information from fully connected layers and gradient through variants of backpropagation. However, it is well understood that gradients are noisy and alternatives like guided backpropagation have been proposed to obtain better visualization at inference. In this work, we present a novel training approach to improve the quality of gradients for interpretability. In particular, we introduce a regularization loss such that the gradient with respect to the input image obtained by standard backpropagation is similar to the gradient obtained by guided backpropagation. We find that the resulting gradient is qualitatively less noisy and improves quantitatively the interpretability properties of different networks, using several interpretability methods.

@article{R50,

title = {A Learning Paradigm for Interpretable Gradients},

author = {Torres Figueroa, Felipe and Zhang, Hanwei and Sicre, Ronan and Avrithis, Yannis and Ayache, Stephane},

journal = {arXiv preprint arXiv:2404.15024},

month = {4},

year = {2024}

}

Explanations obtained from transformer-based architectures, in the form of raw attention, can be seen as a class-agnostic saliency map. Additionally, attention-based pooling serves as a form of masking in the feature space. Motivated by this observation, we design an attention-based pooling mechanism intended to replace Global Average Pooling (GAP) at inference. This mechanism, called Cross-Attention Stream (CA-Stream), comprises a stream of cross attention blocks interacting with features at different network depths. CA-Stream enhances interpretability in models, while preserving recognition performance.

@article{R49,

title = {{CA}-Stream: Attention-based pooling for interpretable image recognition},

author = {Torres Figueroa, Felipe and Zhang, Hanwei and Sicre, Ronan and Ayache, Stephane and Avrithis, Yannis},

journal = {arXiv preprint arXiv:2404.14996},

month = {4},

year = {2024}

}

Methods based on class activation maps (CAM) provide a simple mechanism to interpret predictions of convolutional neural networks by using linear combinations of feature maps as saliency maps. By contrast, masking-based methods optimize a saliency map directly in the image space or learn it by training another network on additional data.

In this work we introduce Opti-CAM, combining ideas from CAM-based and masking-based approaches. Our saliency map is a linear combination of feature maps, where weights are optimized per image such that the logit of the masked image for a given class is maximized. We also fix a fundamental flaw in two of the most common evaluation metrics of attribution methods. On several datasets, Opti-CAM largely outperforms other CAM-based approaches according to the most relevant classification metrics. We provide empirical evidence supporting that localization and classifier interpretability are not necessarily aligned.

@article{R39,

title = {Opti-{CAM}: Optimizing saliency maps for interpretability},

author = {Zhang, Hanwei and Torres, Felipe and Sicre, Ronan and Avrithis, Yannis and Ayache, Stephane},

journal = {arXiv preprint arXiv:2301.07002},

month = {1},

year = {2023}

}

B

Benmokhtar, Rachid

Sophia Antipolis, France Jan 2009

In this paper we propose a methodology for semantic indexing of images, based on techniques of image segmentation, classification and fuzzy reasoning. The proposed knowledge-assisted analysis architecture integrates algorithms applied on three overlapping levels of semantic information: i) no semantics, i.e. segmentation based on low-level features such as color and shape, ii) mid-level semantics, such as concurrent image segmentation and object detection, region-based classification, and iii) rich semantics, i.e. fuzzy reasoning for extraction of implicit knowledge. In that way, we extract a semantic description of raw multimedia content and use it for indexing and retrieval purposes, backed up by a fuzzy knowledge repository. We conducted several experiments to evaluate each technique, as well as the overall methodology, and the results show the potential of our approach.

@conference{C81,

title = {Integrating Image Segmentation and Classification for Fuzzy Knowledge-based Multimedia Indexing},

author = {Athanasiadis, Thanos and Simou, Nikolaos and Papadopoulos, Georgios and Benmokhtar, Rachid and Chandramouli, Krishna and Tzouvaras, Vassilis and Mezaris, Vasileios and Phinikettos, Marios and Avrithis, Yannis and Kompatsiaris, Yiannis and Huet, Benoit and Izquierdo, Ebroul},

booktitle = {Proceedings of 15th International Multimedia Modeling Conference (MMM)},

month = {1},

pages = {263--274},

address = {Sophia Antipolis, France},

year = {2009}

}

Berka, Petr

Athens, Greece Dec 2006

In this paper, we propose the application of rule-based reasoning for knowledge assisted image segmentation and object detection. A region merging approach is proposed based on fuzzy labeling and not on visual descriptors, while reasoning is used in evaluation of dissimilarity between adjacent regions according to rules applied on local information.

@conference{C60,

title = {Rule-based Reasoning for Semantic Image Segmentation and Interpretation},

author = {Berka, Petr and Athanasiadis, Thanos and Avrithis, Yannis},

publisher = {CEUR-WS},

booktitle = {Poster \& Demo Proceedings of 1st International Conference on Semantics And digital Media Technology (SAMT)},

month = {12},

pages = {39--40},

address = {Athens, Greece},

year = {2006}

}

Bloehdorn, Stephan

Special issue on Knowledge-Based Digital Media Processing

153(3):255-262 Jun 2006

Knowledge representation and annotation of multimedia documents typically have been pursued in two different directions. Previous approaches have focused either on low level descriptors, such as dominant color, or on the semantic content dimension and corresponding manual annotations, such as person or vehicle. In this paper, we present a knowledge infrastructure and an experimentation platform for semantic annotation to bridge the two directions. Ontologies are being extended and enriched to include low-level audiovisual features and descriptors. Additionally, we present a tool that allows for linking low-level MPEG-7 visual descriptions to ontologies and annotations. This way we construct ontologies that include prototypical instances of high-level domain concepts together with a formal specification of the corresponding visual descriptors. This infrastructure is exploited by a knowledge-assisted analysis framework that may handle problems like segmentation, tracking, feature extraction and matching in order to classify scenes, identify and label objects, and thus automatically create the associated semantic metadata.

@article{J9,

title = {Knowledge Representation and Semantic Annotation of Multimedia Content},

author = {Petridis, Kosmas and Bloehdorn, Stephan and Saathoff, Carsten and Simou, Nikolaos and Dasiopoulou, Stamatia and Tzouvaras, Vassilis and Handschuh, Siegfried and Avrithis, Yannis and Kompatsiaris, Ioannis and Staab, Steffen},

journal = {IEE Proceedings on Vision, Image and Signal Processing (VISP) (Special Issue on Knowledge-Based Digital Media Processing)},

volume = {153},

number = {3},

month = {6},

pages = {255--262},

year = {2006}

}

Heraklion, Greece May 2005

Annotations of multimedia documents typically have been pursued in two different directions. Previous approaches have focused either on low-level descriptors, such as dominant color, or on the content dimension and corresponding annotations, such as person or vehicle. In this paper, we present a software environment to bridge between the two directions. M-OntoMat-Annotizer allows for linking low-level MPEG-7 visual descriptions to conventional Semantic Web ontologies and annotations. We use M-OntoMat-Annotizer in order to construct ontologies that include prototypical instances of high-level domain concepts together with a formal specification of corresponding visual descriptors. Thus, we formalize the interrelationship of high- and low-level multimedia concept descriptions, allowing for new kinds of multimedia content analysis and reasoning.

@conference{C38,

title = {Semantic Annotation of Images and Videos for Multimedia Analysis},

author = {Bloehdorn, Stephan and Petridis, Kosmas and Saathoff, Carsten and Simou, Nikolaos and Tzouvaras, Vassilis and Avrithis, Yannis and Handschuh, Siegfried and Kompatsiaris, Yiannis and Staab, Steffen and Strintzis, Michael G.},

booktitle = {Proceedings of 2nd European Semantic Web Conference (ESWC)},

month = {5},

address = {Heraklion, Greece},

year = {2005}

}

London, U.K. Nov 2004

In this paper, a knowledge representation infrastructure for semantic multimedia content analysis and reasoning is presented. This is one of the major objectives of the aceMedia Integrated Project, where ontologies are being extended and enriched to include low-level audiovisual features, descriptors and behavioural models in order to support automatic content annotation. More specifically, the developed infrastructure consists of the core ontology, based on extensions of the DOLCE core ontology, and the multimedia-specific infrastructure components. These are the Visual Descriptors Ontology, which is based on an RDFS representation of the MPEG-7 Visual Descriptors, and the Multimedia Structure Ontology, which is based on the MPEG-7 MDS. Furthermore, the developed Visual Descriptor Extraction tool is presented, which will support the initialization of domain ontologies with multimedia features.

@conference{C32,

title = {Knowledge Representation for Semantic Multimedia Content Analysis and Reasoning},

author = {Petridis, Kosmas and Kompatsiaris, Ioannis and Strintzis, Michael G. and Bloehdorn, Stephan and Handschuh, Siegfried and Staab, Steffen and Simou, Nikolaos and Tzouvaras, Vassilis and Avrithis, Yannis},

booktitle = {Proceedings of European Workshop on the Integration of Knowledge, Semantics and Digital Media Technology (EWIMT)},

month = {11},

address = {London, U.K.},

year = {2004}

}

Budnik, Mateusz

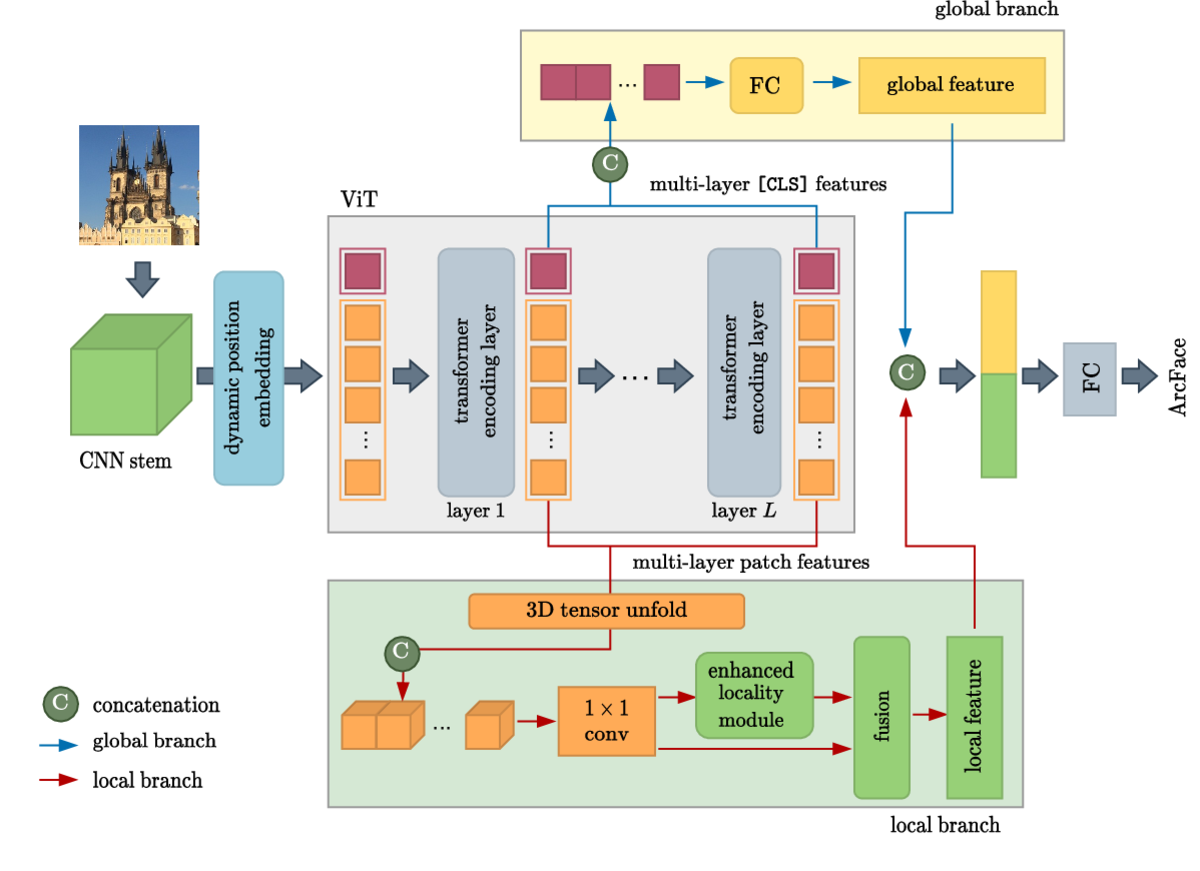

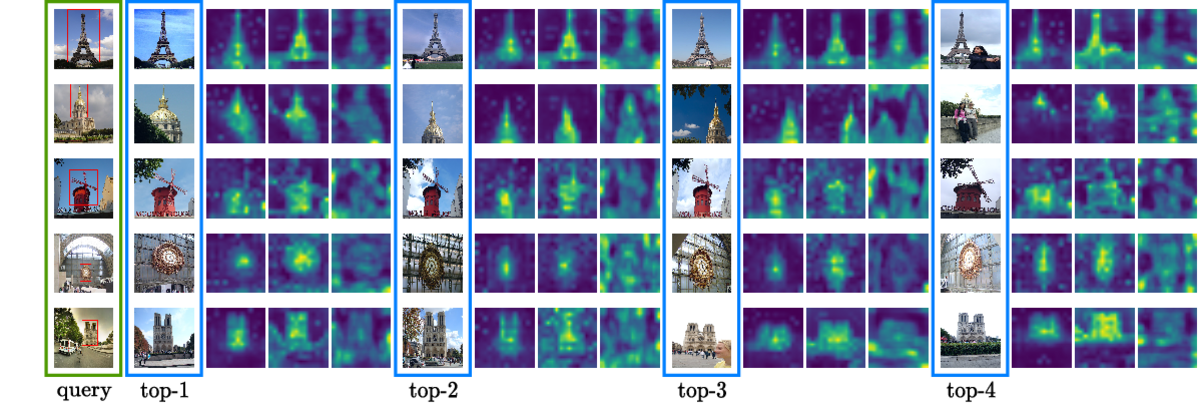

Virtual Jun 2021

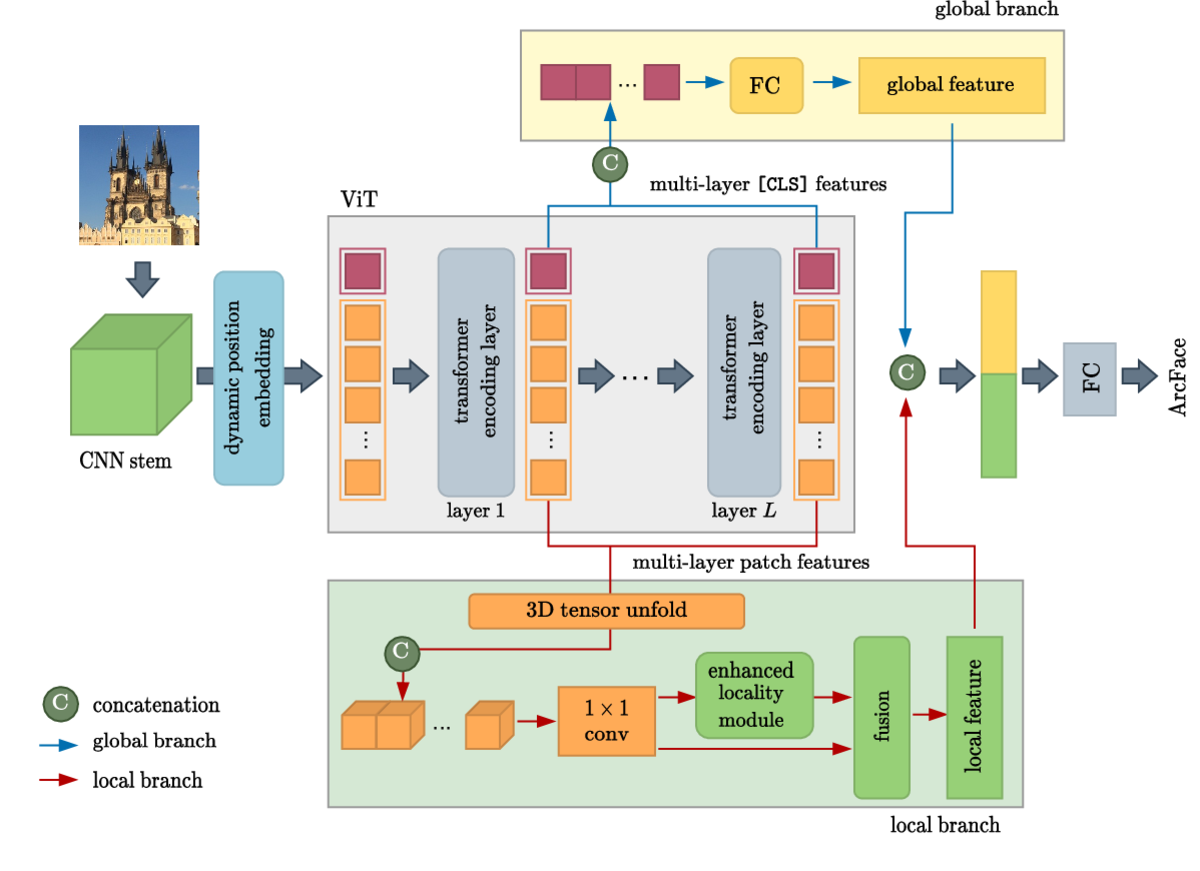

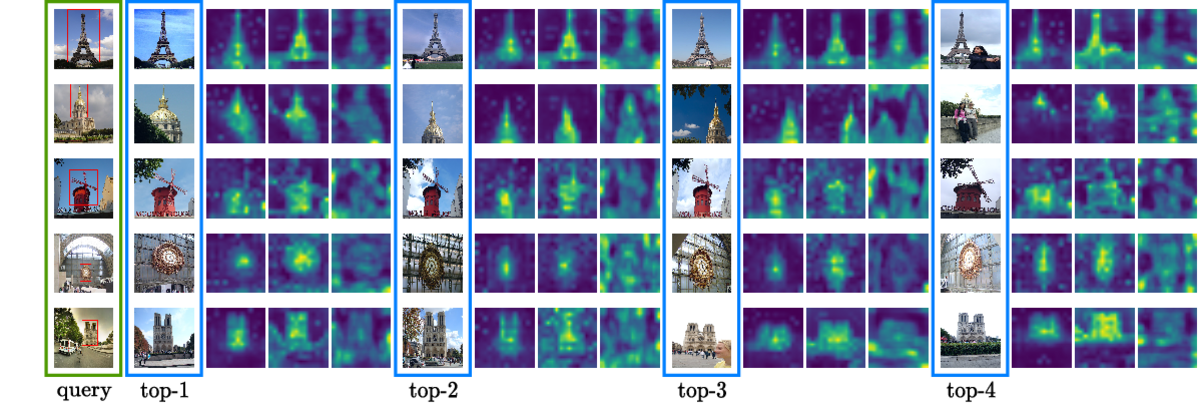

Knowledge transfer from large teacher models to smaller student models has recently been studied for metric learning, focusing on fine-grained classification. In this work, focusing on instance-level image retrieval, we study an asymmetric testing task, where the database is represented by the teacher and queries by the student. Inspired by this task, we introduce asymmetric metric learning, a novel paradigm of using asymmetric representations at training. This acts as a simple combination of knowledge transfer with the original metric learning task.

We systematically evaluate different teacher and student models, metric learning and knowledge transfer loss functions on the new asymmetric testing as well as the standard symmetric testing task, where database and queries are represented by the same model. We find that plain regression is surprisingly effective compared to more complex knowledge transfer mechanisms, working best in asymmetric testing. Interestingly, our asymmetric metric learning approach works best in symmetric testing, allowing the student to even outperform the teacher.

@conference{C117,

title = {Asymmetric metric learning for knowledge transfer},

author = {Budnik, Mateusz and Avrithis, Yannis},

booktitle = {Proceedings of IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {6},

address = {Virtual},

year = {2021}

}

Virtual Dec 2020

Active learning typically focuses on training a model on few labeled examples alone, while unlabeled ones are only used for acquisition. In this work we depart from this setting by using both labeled and unlabeled data during model training across active learning cycles. We do so by using unsupervised feature learning at the beginning of the active learning pipeline and semi-supervised learning at every active learning cycle, on all available data. The former has not been investigated before in active learning, while the study of the latter in the context of deep learning is scarce and recent findings are not conclusive with respect to its benefit. Our idea is orthogonal to acquisition strategies by using more data, much like ensemble methods use more models. By systematically evaluating on a number of popular acquisition strategies and datasets, we find that the use of unlabeled data during model training brings a spectacular accuracy improvement in image classification, compared to the differences between acquisition strategies. We thus explore smaller label budgets, even one label per class.

@conference{C113,

title = {Rethinking deep active learning: Using unlabeled data at model training},

author = {Sim\'eoni, Oriane and Budnik, Mateusz and Avrithis, Yannis and Gravier, Guillaume},

booktitle = {Proceedings of International Conference on Pattern Recognition (ICPR)},

month = {12},

address = {Virtual},

year = {2020}

}

Knowledge transfer from large teacher models to smaller student models has recently been studied for metric learning, focusing on fine-grained classification. In this work, focusing on instance-level image retrieval, we study an asymmetric testing task, where the database is represented by the teacher and queries by the student. Inspired by this task, we introduce asymmetric metric learning, a novel paradigm of using asymmetric representations at training. This acts as a simple combination of knowledge transfer with the original metric learning task.

We systematically evaluate different teacher and student models, metric learning and knowledge transfer loss functions on the new asymmetric testing as well as the standard symmetric testing task, where database and queries are represented by the same model. We find that plain regression is surprisingly effective compared to more complex knowledge transfer mechanisms, working best in asymmetric testing. Interestingly, our asymmetric metric learning approach works best in symmetric testing, allowing the student to even outperform the teacher.

@article{R27,

title = {Asymmetric metric learning for knowledge transfer},

author = {Budnik, Mateusz and Avrithis, Yannis},

journal = {arXiv preprint arXiv:2006.16331},

month = {6},

year = {2020}

}

Active learning typically focuses on training a model on few labeled examples alone, while unlabeled ones are only used for acquisition. In this work we depart from this setting by using both labeled and unlabeled data during model training across active learning cycles. We do so by using unsupervised feature learning at the beginning of the active learning pipeline and semi-supervised learning at every active learning cycle, on all available data. The former has not been investigated before in active learning, while the study of the latter in the context of deep learning is scarce and recent findings are not conclusive with respect to its benefit. Our idea is orthogonal to acquisition strategies by using more data, much like ensemble methods use more models. By systematically evaluating on a number of popular acquisition strategies and datasets, we find that the use of unlabeled data during model training brings a spectacular accuracy improvement in image classification, compared to the differences between acquisition strategies. We thus explore smaller label budgets, even one label per class.

@article{R23,

title = {Rethinking deep active learning: Using unlabeled data at model training},

author = {Sim\'eoni, Oriane and Budnik, Mateusz and Avrithis, Yannis and Gravier, Guillaume},

journal = {arXiv preprint arXiv:1911.08177},

month = {11},

year = {2019}

}

Buitelaar, Paul

Vol. 4816 Dec 2007

Springer ISBN 978-3-540-77033-6

This book constitutes the refereed proceedings of the Second International Conference on Semantics and Digital Media Technologies, SAMT 2007, held in Genoa, Italy, in December 2007. The 16 revised full papers, 10 revised short papers and 10 poster papers presented together with three awarded PhD papers were carefully reviewed and selected from 55 submissions. The conference brings together forums, projects, institutions and individuals investigating the integration of knowledge, semantics and low-level multimedia processing, including new emerging media and application areas. The papers are organized in topical sections on knowledge based content processing, semantic multimedia annotation, domain-restricted generation of semantic metadata from multimodal sources, classification and annotation of multidimensional content, content adaptation, MX: the IEEE standard for interactive music, as well as poster papers and K-space awarded PhD papers.

@book{V2,

title = {Semantic Multimedia},

editor = {Falcidieno, Bianca and Spagnuolo, Michela and Avrithis, Yannis and Kompatsiaris, Ioannis and Buitelaar, Paul},

publisher = {Springer},

series = {Lecture Notes in Computer Science (LNCS)},

volume = {4816},

month = {12},

isbn = {978-3-540-77033-6},

year = {2007}

}

Bursuc, Andrei

Tel Aviv, Israel Oct 2022

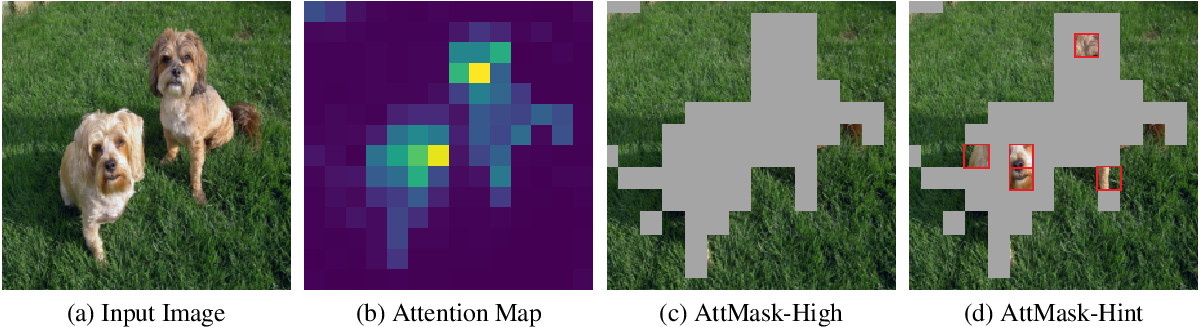

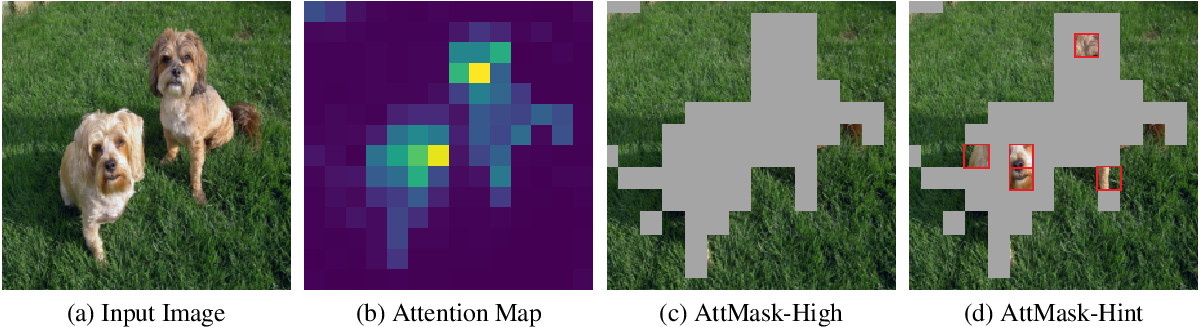

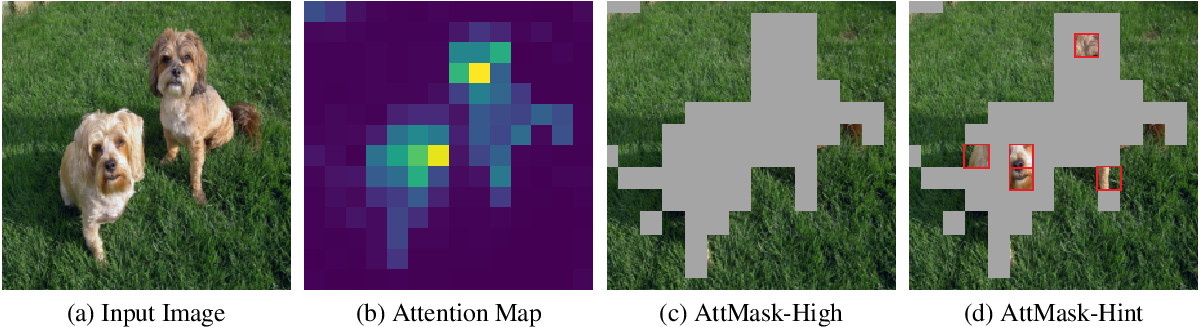

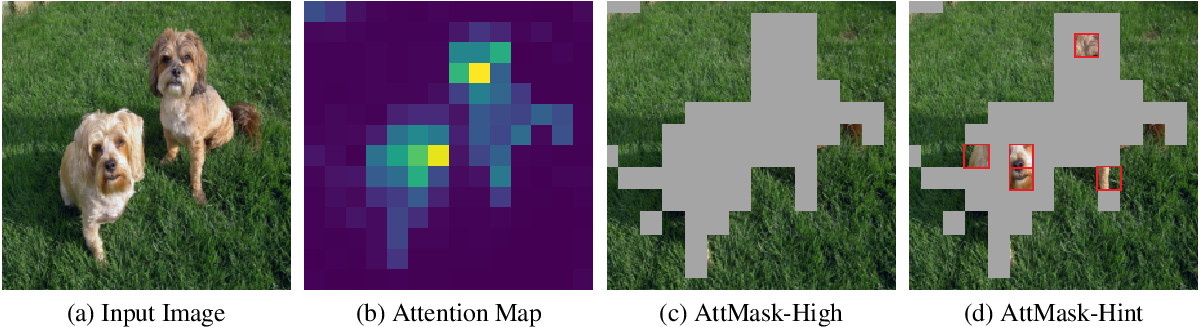

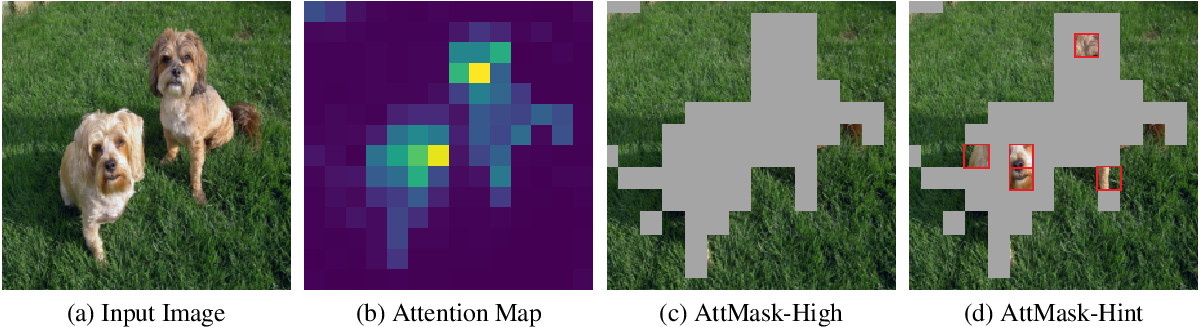

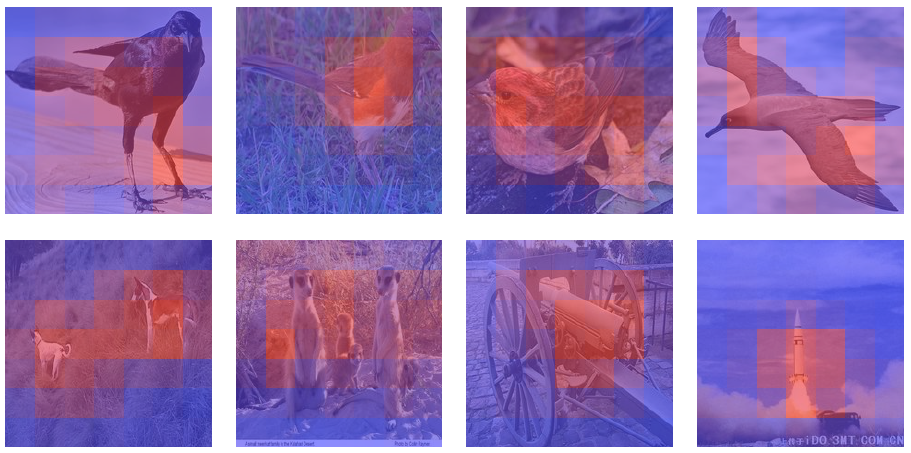

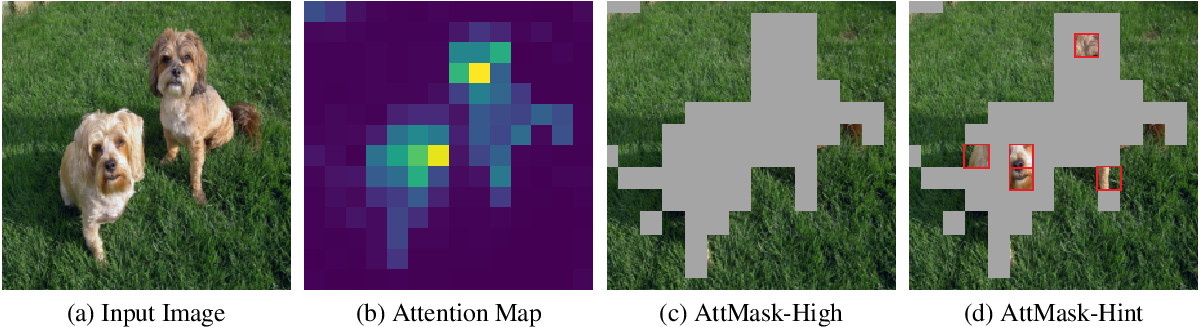

Transformers and masked language modeling are quickly being adopted and explored in computer vision as vision transformers and masked image modeling (MIM). In this work, we argue that image token masking differs from token masking in text, due to the amount and correlation of tokens in an image. In particular, to generate a challenging pretext task for MIM, we advocate a shift from random masking to informed masking. We develop and exhibit this idea in the context of distillation-based MIM, where a teacher transformer encoder generates an attention map, which we use to guide masking for the student.

We thus introduce a novel masking strategy, called attention-guided masking (AttMask), and we demonstrate its effectiveness over random masking for dense distillation-based MIM as well as plain distillation-based self-supervised learning on classification tokens. We confirm that AttMask accelerates the learning process and improves the performance on a variety of downstream tasks. We provide the implementation code at https://github.com/gkakogeorgiou/attmask.

@conference{C125,

title = {What to Hide from Your Students: Attention-Guided Masked Image Modeling},

author = {Kakogeorgiou, Ioannis and Gidaris, Spyros and Psomas, Bill and Avrithis, Yannis and Bursuc, Andrei and Karantzalos, Konstantinos and Komodakis, Nikos},

booktitle = {Proceedings of European Conference on Computer Vision (ECCV)},

month = {10},

address = {Tel Aviv, Israel},

year = {2022}

}

Transformers and masked language modeling are quickly being adopted and explored in computer vision as vision transformers and masked image modeling (MIM). In this work, we argue that image token masking differs from token masking in text, due to the amount and correlation of tokens in an image. In particular, to generate a challenging pretext task for MIM, we advocate a shift from random masking to informed masking. We develop and exhibit this idea in the context of distillation-based MIM, where a teacher transformer encoder generates an attention map, which we use to guide masking for the student. We thus introduce a novel masking strategy, called attention-guided masking (AttMask), and we demonstrate its effectiveness over random masking for dense distillation-based MIM as well as plain distillation-based self-supervised learning on classification tokens. We confirm that AttMask accelerates the learning process and improves the performance on a variety of downstream tasks. We provide the implementation code at https://github.com/gkakogeorgiou/attmask.

@article{R36,

title = {What to Hide from Your Students: Attention-Guided Masked Image Modeling},

author = {Kakogeorgiou, Ioannis and Gidaris, Spyros and Psomas, Bill and Avrithis, Yannis and Bursuc, Andrei and Karantzalos, Konstantinos and Komodakis, Nikos},

journal = {arXiv preprint arXiv:2203.12719},

month = {7},

year = {2022}

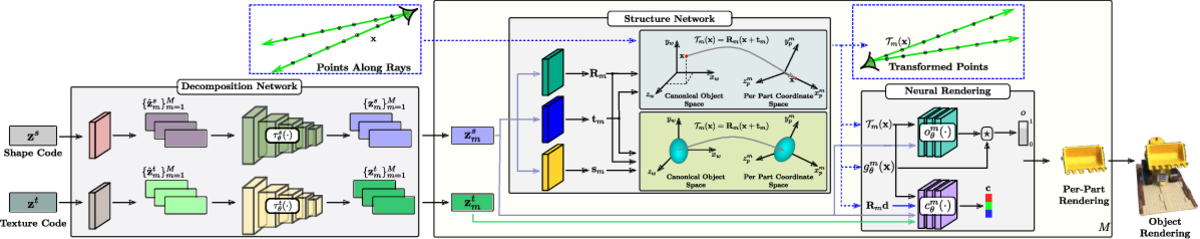

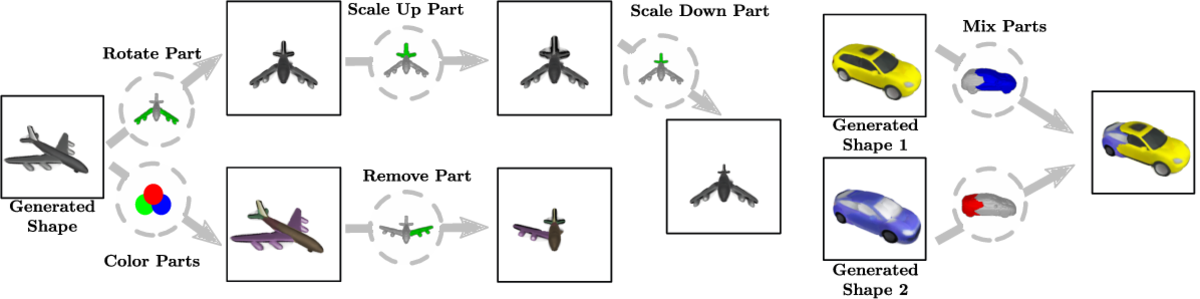

}

Long Beach, CA, US Jun 2019